Executive Summary

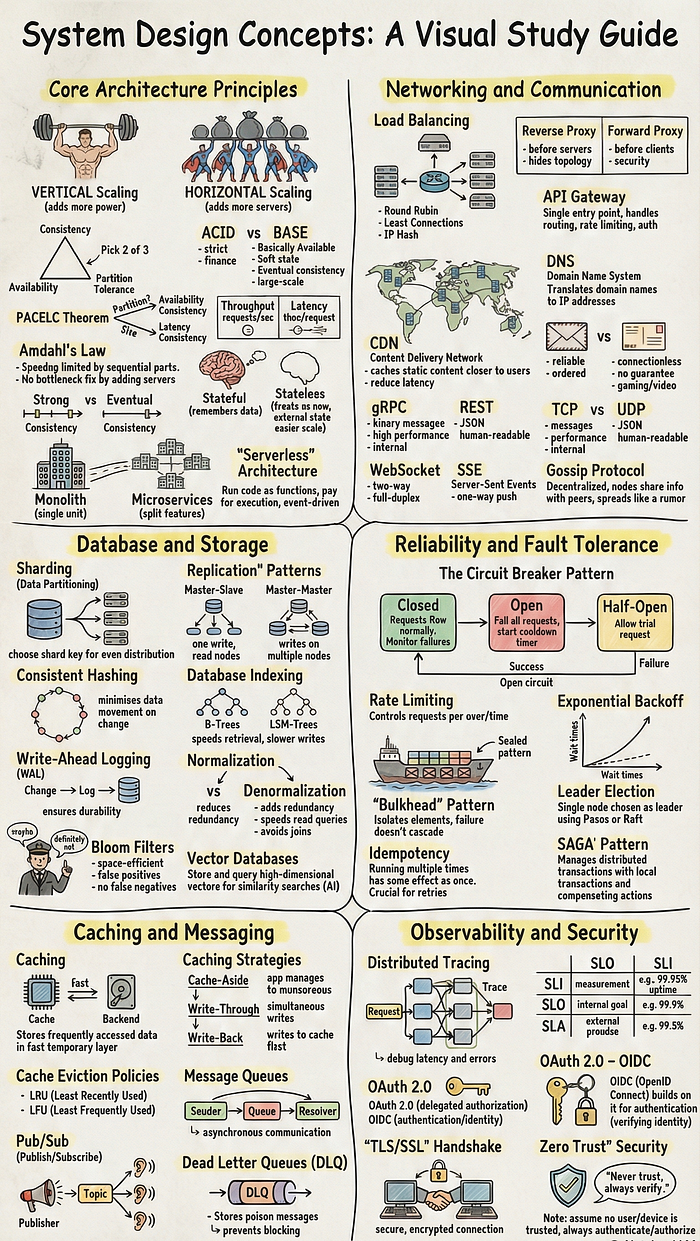

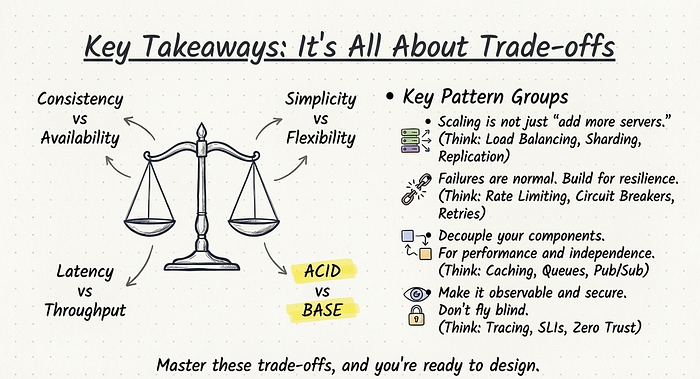

This document synthesizes 50 fundamental concepts in system design, drawing from a comprehensive guide on the subject. The core insight is that effective system design is an exercise in managing trade-offs, particularly between consistency and availability, latency and throughput, and simplicity and flexibility. Successful scaling takes more than adding servers; it requires a deep understanding of load balancing, data sharding, replication, and bottleneck identification.

Reliability in distributed systems is not an accident but a deliberate architectural choice, achieved through patterns like rate limiting, circuit breakers, retries, and bulkheads, which are designed to handle expected failures gracefully. Performance and decoupling are significantly enhanced by tools such as caching, message queues, and publish-subscribe models, though these introduce their own complexities regarding data consistency and message ordering. Finally, modern systems must be built with observability and security as primary concerns, incorporating distributed tracing, service level indicators (SLIs), robust authentication (OAuth/OIDC), data-in-transit encryption (TLS), and a Zero Trust security posture to ensure they are not only performant but also safe, secure, and debuggable.

I. Core Architecture Principles

This section outlines the foundational principles and architectural choices that govern how systems are structured, scaled, and managed.

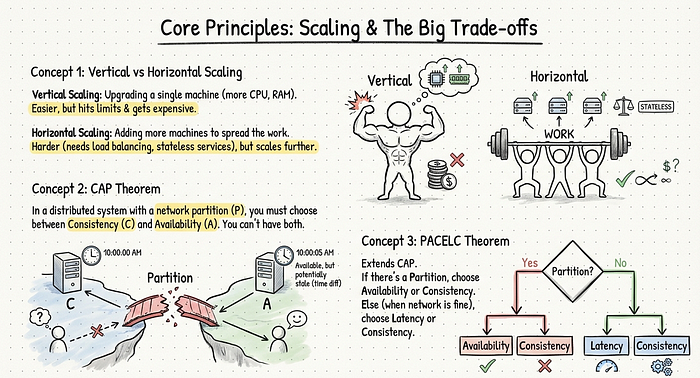

Vertical vs. Horizontal Scaling

- Vertical Scaling: Involves upgrading a single machine by adding more CPU, RAM, or faster storage. It is simpler to implement but is constrained by hardware limits and becomes progressively more expensive. The analogy provided is a single superhero getting stronger.

- Horizontal Scaling: Involves adding more machines and distributing the workload across them. While more complex, requiring load balancing, stateless services, and shared storage, it offers greater scalability. The analogy is building a team of superheroes.

CAP Theorem

- The CAP Theorem states that in a distributed system experiencing a network partition, it is impossible to simultaneously guarantee both Consistency and Availability.

- Consistency: Every read returns the most recent write, so all users see the same data at the same time.

- Availability: The system always provides a response, even if the data may be temporarily out of date.

- A system must choose which of these two guarantees to sacrifice during a network failure.

PACELC Theorem

- PACELC is an extension of the CAP theorem. It posits that: if there is a Partition, a system must choose between Availability and Consistency; Else (in normal operation), it must choose between Latency and Consistency.

- This theorem clarifies that even without network failures, systems face a trade-off between fast reads that may return slightly stale data (lower latency) and slower reads that are guaranteed to be up to date (stronger consistency).

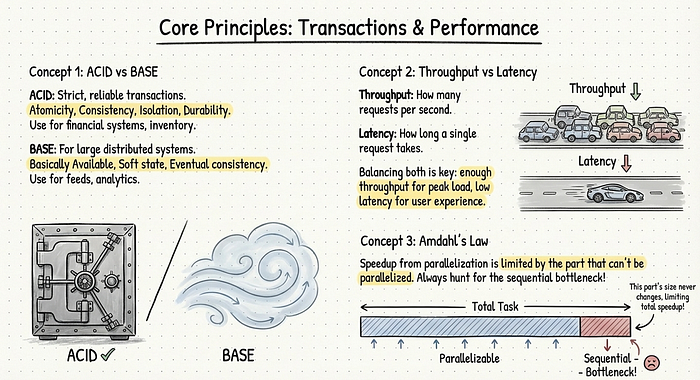

ACID vs. BASE

- ACID (Atomicity, Consistency, Isolation, Durability): A set of properties for strict, reliable database transactions. It is essential for systems where data integrity is paramount, such as financial or inventory management systems.

- BASE (Basically Available, Soft state, Eventual consistency): An alternative model for large-scale distributed systems that prioritize high availability and rapid response times. BASE systems may exhibit temporary inconsistencies that resolve over time.

- Many modern architectures employ a hybrid approach, using ACID for critical transactional flows and BASE for less critical functions like activity feeds or analytics.

Throughput vs. Latency

- Throughput: The number of requests a system can process per unit of time (e.g., requests per second).

- Latency: The time taken to process a single request from start to finish.

- These two metrics are often in opposition; increasing throughput by processing more work in parallel can lead to queue buildup and increased latency for individual requests. Effective system design seeks to balance both for an optimal user experience.

Amdahl’s Law

- This law states that the potential performance improvement from parallelization is limited by the portion of the system that must remain sequential.

- If a part of a process is inherently non-parallelizable (e.g., a final step that must hit a single master database), that part will become the ultimate bottleneck, capping overall performance regardless of how many more resources are added.
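
The cap described above can be made concrete with the usual formula, speedup = 1 / ((1 - p) + p / n), where p is the parallelizable fraction and n is the number of workers. The sketch below is illustrative only; the function name `amdahl_speedup` and the 90% figure are assumptions, not taken from the original guide.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Theoretical speedup when `parallel_fraction` of the work is spread over `workers`."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / workers)


# Hypothetical workload: 90% parallelizable, so the ceiling is 1 / 0.10 = 10x
# no matter how many workers are added.
for n in (1, 10, 100, 1000):
    print(n, round(amdahl_speedup(0.9, n), 2))
```

Going from 100 to 1000 workers barely moves the result, which is the practical reading of the law: past a certain point, shrinking the sequential portion matters more than adding resources.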

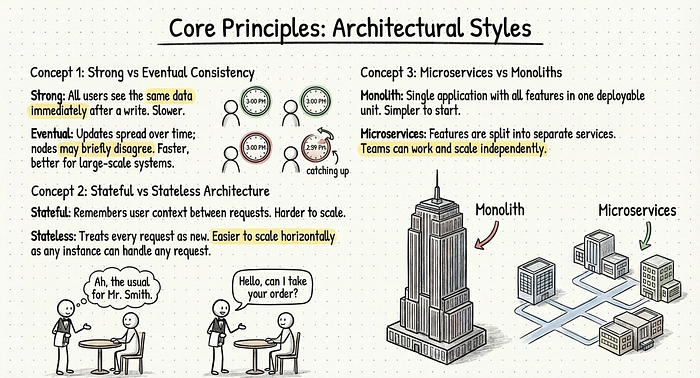

Strong vs. Eventual Consistency

- Strong Consistency: Guarantees that all users see the same data immediately following a write operation. It is simpler to reason about but can be slower and less available during failures.

- Eventual Consistency: Allows for a brief period where different nodes in a distributed system may have different versions of the data. Updates propagate through the system over time. This model is suited for large-scale applications where immediate consistency is not critical, such as social media timelines.

Stateful vs. Stateless Architecture

- Stateful Service: Remembers user-specific context or session data between requests, often storing it locally. This can simplify application logic but complicates scaling, load balancing, and failover.

- Stateless Service: Treats every request as new and self-contained, relying on external storage (e.g., databases, caches) for any required state. Stateless services are easier to scale horizontally, as any server instance can handle any request.

Microservices vs. Monoliths

- Monolith: A single, unified application where all features are contained within one deployable unit. Monoliths are simpler to develop and deploy initially.

- Microservices: An architectural style that splits application features into small, independent services that communicate over a network. This approach allows teams to work independently and scale different components separately but introduces complexity in communication, debugging, and data management.

- A common evolutionary path is to start with a monolith and gradually break it apart into microservices as the system grows and its pain points become clear.

Serverless Architecture

- Also known as “Functions as a Service” (FaaS), serverless architecture allows developers to run small, event-driven functions in the cloud without managing the underlying server infrastructure.

- Advantages: Pay-per-use pricing and automatic scaling handled by the cloud provider. Ideal for workloads with spiky traffic like webhooks, background jobs, or simple APIs.

- Trade-offs: Can involve “cold starts” (initial latency), less control over long-running tasks, and potentially higher costs at sustained high volumes.

II. Networking and Communication

This section covers the protocols, patterns, and components used to manage traffic and facilitate communication between different parts of a system.

Load Balancing

- Function: Distributes incoming network traffic across multiple servers to prevent any single server from becoming a bottleneck.

- Benefits: Improves both system performance and reliability, as the failure of one server does not bring down the entire application.

- Implementation: Can be a hardware appliance or a software service. Load balancers typically use health checks to avoid sending traffic to unresponsive servers.

Load Balancing Algorithms

- Round Robin: Distributes requests to servers sequentially in a circular order. Simple but does not account for server load or request complexity.

- Least Connections: Sends new requests to the server with the fewest active connections. This is effective when requests have varying completion times.

- IP Hash: Uses a hash of the client’s IP address to determine which server receives the request. This provides a basic form of “session stickiness,” routing a user to the same server for as long as their IP address and the server pool stay the same.
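
The three algorithms above can be sketched as small selection policies. This is a minimal illustration rather than a production load balancer: the class names, the `pick`/`release` methods, and the use of CRC32 as the hash are all assumptions made for the example.

```python
import itertools
import zlib


class RoundRobin:
    """Hand out servers in a fixed circular order."""

    def __init__(self, servers):
        self._cycle = itertools.cycle(list(servers))

    def pick(self, client_ip=None):
        return next(self._cycle)


class LeastConnections:
    """Send each new request to the server with the fewest active connections."""

    def __init__(self, servers):
        self.active = {server: 0 for server in servers}

    def pick(self, client_ip=None):
        server = min(self.active, key=self.active.get)  # ties go to the first server
        self.active[server] += 1
        return server

    def release(self, server):
        # The caller reports when a request has finished.
        self.active[server] -= 1


class IpHash:
    """Map a client IP to a server by hashing the address."""

    def __init__(self, servers):
        self.servers = list(servers)

    def pick(self, client_ip):
        # crc32 is stable across runs, unlike Python's built-in hash() for strings.
        return self.servers[zlib.crc32(client_ip.encode()) % len(self.servers)]
```

Note that the modulo in `IpHash` remaps most clients whenever the server list changes size, which is the problem consistent hashing is designed to reduce.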

Reverse Proxy vs. Forward Proxy

- Reverse Proxy: Sits in front of a group of servers, intercepting client requests and forwarding them to the appropriate backend server. It can handle tasks like TLS termination, caching, compression, and routing, while hiding the internal network topology.

- Forward Proxy: Sits in front of clients, forwarding their requests to the internet. It is often used for security, content filtering, or caching within a corporate or private network.

API Gateway

- An API Gateway is a specialized reverse proxy that serves as the single entry point for all API calls in a microservices architecture.

- Responsibilities: Handles routing, rate limiting, authentication, logging, and response transformation.

- Benefit: Simplifies the client-side by providing a single, unified endpoint.

- Risk: Can become a bottleneck or a “mini monolith” if too much business logic is embedded within it.

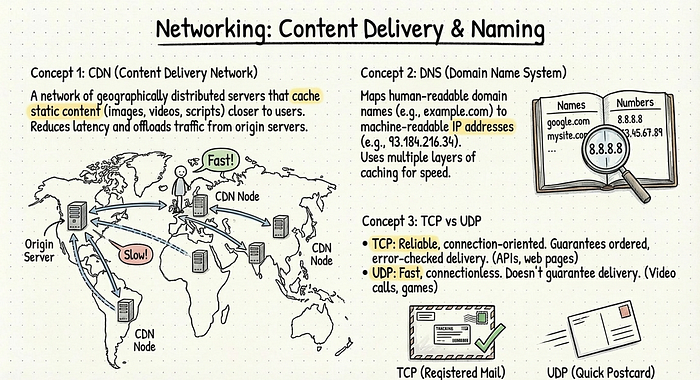

CDN (Content Delivery Network)

- A CDN is a geographically distributed network of proxy servers that cache static assets (images, videos, CSS, JavaScript) close to end-users.

- Function: When a user requests content, the request is routed to the nearest CDN node, dramatically reducing latency.

- Benefits: Offloads traffic from origin servers, improves front-end performance, and increases application scalability and resilience.

DNS (Domain Name System)

- DNS is the system that translates human-readable domain names (e.g., www.example.com) into machine-readable IP addresses (e.g., 192.0.2.1).

- It operates with multiple layers of caching for fast lookups and can be used for basic load balancing by returning different IP addresses for the same domain name.

TCP vs. UDP

- TCP (Transmission Control Protocol): A connection-oriented protocol that guarantees reliable, ordered, and error-checked delivery of data. It is suitable for applications where data integrity is critical, such as web browsing, file transfers, and APIs.

- UDP (User Datagram Protocol): A connectionless protocol that is faster and has less overhead than TCP but does not guarantee delivery or order. It is well-suited for real-time applications like video streaming and online gaming, where speed is more important than perfect reliability.

HTTP/2 and HTTP/3 (QUIC)

- HTTP/2: Improved upon HTTP/1.1 by introducing request multiplexing over a single TCP connection, header compression, and server push, all aimed at reducing latency.

- HTTP/3: Further enhances performance by running over QUIC (a transport protocol built on UDP), which reduces connection setup time and performs better on unreliable networks with packet loss.

gRPC vs. REST

- REST: An architectural style that typically uses HTTP and JSON. It is resource-oriented, human-readable, and widely adopted for public-facing APIs.

- gRPC: A high-performance RPC framework that uses HTTP/2 for transport and Protocol Buffers (protobuf) for binary serialization. Its binary payloads are typically smaller and faster to parse than JSON over REST, and it supports features like bidirectional streaming, making it a popular choice for internal service-to-service communication in microservices architectures.

WebSocket and Server-Sent Events (SSE)

- WebSockets: Provide a persistent, full-duplex (two-way) communication channel between a client and a server over a single TCP connection. Ideal for real-time interactive applications like chat, collaborative editing, and multiplayer games.

- SSE: A simpler protocol that allows a server to push updates to a client over a one-way channel using standard HTTP. It is suitable for use cases where only the server needs to send data, such as live news feeds or stock tickers.

Long Polling

- A technique that simulates server-push functionality over standard HTTP. The client sends a request to the server, which holds the connection open until it has new data to send or a timeout occurs. Upon receiving a response, the client immediately initiates a new request.

- It is less efficient than WebSockets but is easier to implement and compatible with older proxies and firewalls.

Gossip Protocol

- A decentralized communication protocol where nodes in a distributed system share information by periodically exchanging data with random peers.

- Information propagates through the network “like gossip,” ensuring that all nodes eventually converge on a consistent view without a central coordinator. It is highly fault-tolerant and used for service discovery, health monitoring, and state dissemination in large clusters.

III. Database and Storage Internals

This section details the techniques and technologies used to manage data at scale, focusing on partitioning, replication, indexing, and transactional integrity.

Sharding (Data Partitioning)

- Definition: The process of splitting a large database into smaller, more manageable pieces called shards, with each shard residing on a separate machine.

- Goal: To scale database storage capacity and throughput horizontally.

- Strategies: Include range-based, hash-based, and directory-based sharding.

- Challenge: Choosing an effective shard key is crucial to avoid “hot spots,” where one shard receives a disproportionate amount of traffic.

Replication Patterns

- Definition: The practice of keeping multiple copies of data on different nodes to improve availability and read performance.

- Master-Slave (Primary-Replica): One node (the master) handles all write operations, which are then replicated to one or more slave nodes that can serve read requests.

- Master-Master (Multi-Primary): Multiple nodes can accept write operations, and they synchronize data with each other. This increases write availability but introduces complexity in resolving write conflicts.

Consistent Hashing

- A hashing technique designed to minimize data re-shuffling when nodes are added to or removed from a distributed system (like a cache or database).

- Both keys and nodes are mapped to a logical ring. A key is assigned to the first node encountered moving clockwise on the ring. This ensures that when a node is added or removed, only a small, adjacent set of keys needs to be remapped.
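
The ring described above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the `HashRing` class and its method names are hypothetical, MD5 is used only as a convenient stable hash, and each node is placed on the ring at several "virtual node" positions, a common refinement that the text above does not mention.

```python
import bisect
import hashlib


def _ring_position(value: str) -> int:
    # MD5 is used here only for a stable, well-spread hash, not for security.
    return int(hashlib.md5(value.encode()).hexdigest(), 16)


class HashRing:
    def __init__(self, nodes=(), vnodes=100):
        self.vnodes = vnodes      # assumption: 100 virtual positions per node
        self._positions = []      # sorted positions on the ring
        self._owner = {}          # ring position -> node name
        for node in nodes:
            self.add(node)

    def add(self, node):
        for i in range(self.vnodes):
            pos = _ring_position(f"{node}#{i}")
            bisect.insort(self._positions, pos)
            self._owner[pos] = node

    def remove(self, node):
        for i in range(self.vnodes):
            pos = _ring_position(f"{node}#{i}")
            self._positions.remove(pos)
            del self._owner[pos]

    def lookup(self, key):
        if not self._positions:
            raise LookupError("ring is empty")
        # First position clockwise from the key, wrapping around at the end.
        idx = bisect.bisect_right(self._positions, _ring_position(key))
        return self._owner[self._positions[idx % len(self._positions)]]
```

When a node is added, the only keys that change owner are the ones the new node takes over; every other key stays where it was.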

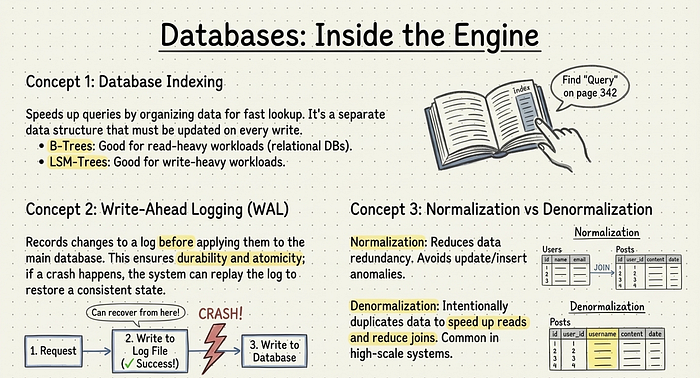

Database Indexing

- Purpose: Indexes are data structures that improve the speed of data retrieval operations on a database table at the cost of slower writes and increased storage space.

- B-Trees: Balanced tree structures common in relational databases. They keep data sorted and are efficient for both point lookups and range queries.

- LSM (Log-Structured Merge) Trees: Optimize for high write throughput by batching writes in memory and periodically flushing them to sorted files on disk. Reads can be more complex as they may need to check multiple files.

Write-Ahead Logging (WAL)

- A standard method for ensuring data durability and atomicity. Before any changes are applied to the database itself, they are first recorded in a sequential log file on durable storage.

- In the event of a system crash, the database can replay the log to recover to a consistent state, preventing data corruption from partially completed transactions.
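
The log-then-apply ordering can be illustrated with a toy key-value store. This is a simplified sketch, not how any particular database implements its log: the `WalStore` class, the JSON-lines record format, and the assumption that every record reaches disk in full (no torn final write) are all choices made for the example.

```python
import json
import os


class WalStore:
    """Toy key-value store that logs every write before applying it."""

    def __init__(self, log_path):
        self.log_path = log_path
        self.data = {}
        self._replay()  # rebuild in-memory state from the log
        self._log = open(log_path, "a", encoding="utf-8")

    def _replay(self):
        if not os.path.exists(self.log_path):
            return
        with open(self.log_path, encoding="utf-8") as f:
            for line in f:
                record = json.loads(line)  # assumes each record was written completely
                self.data[record["key"]] = record["value"]

    def put(self, key, value):
        # 1. Append the change to the sequential log and force it to disk.
        self._log.write(json.dumps({"key": key, "value": value}) + "\n")
        self._log.flush()
        os.fsync(self._log.fileno())
        # 2. Only then apply it to the "database" (here, a dict in memory).
        self.data[key] = value

    def close(self):
        self._log.close()
```

Opening a new `WalStore` on the same path after a crash replays the log and arrives at the same state, because no change was ever applied without first being logged.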

Normalization vs. Denormalization

- Normalization: The process of organizing data in a relational database to minimize redundancy and improve data integrity by dividing larger tables into smaller, well-structured ones.

- Denormalization: The intentional introduction of redundancy by duplicating data across multiple tables. This is often done in high-scale systems to optimize read performance by avoiding expensive join operations.

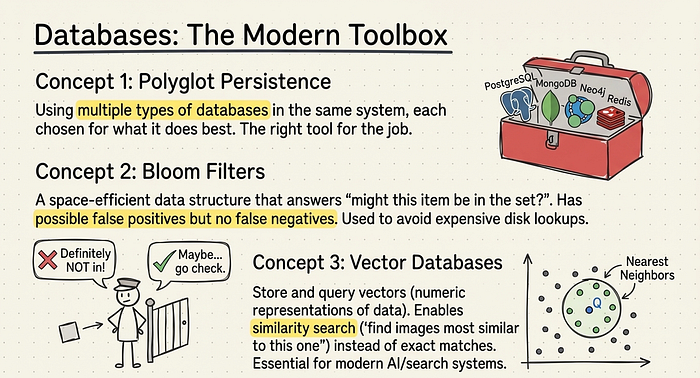

Polyglot Persistence

- The practice of using multiple different database technologies within a single application, choosing the best tool for each specific job.

- An application might use a relational database for transactional data, a document store for unstructured content, a key-value store for caching, and a graph database for relationship-heavy data. This adds operational complexity but allows for optimized performance and functionality.

Bloom Filters

- A probabilistic, space-efficient data structure used to test whether an element is a member of a set.

- It can produce false positives (it might incorrectly say an element is in the set) but never false negatives (if it says an element is not in the set, it is definitively not).

- They are used to avoid expensive lookups for items that are definitely not present, for example by consulting the filter before querying a database or reading from disk.
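
A minimal sketch of the structure follows. The `BloomFilter` class, its default sizes, and the way several hash functions are derived from seeded SHA-256 digests are assumptions chosen for readability; real implementations use packed bit arrays and faster hashes.

```python
import hashlib


class BloomFilter:
    def __init__(self, size_bits=1024, num_hashes=3):
        self.size = size_bits
        self.num_hashes = num_hashes
        # One byte per bit keeps the example readable; it is not space-optimal.
        self.bits = bytearray(size_bits)

    def _indexes(self, item: str):
        # Derive several hash functions by prefixing the item with a seed.
        for seed in range(self.num_hashes):
            digest = hashlib.sha256(f"{seed}:{item}".encode()).digest()
            yield int.from_bytes(digest[:8], "big") % self.size

    def add(self, item: str):
        for i in self._indexes(item):
            self.bits[i] = 1

    def might_contain(self, item: str) -> bool:
        # False means "definitely absent"; True means "possibly present".
        return all(self.bits[i] for i in self._indexes(item))
```

A caller would typically skip the expensive lookup whenever `might_contain` returns `False`, and fall through to the real data store otherwise.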

Vector Databases

- Specialized databases designed to store, manage, and query high-dimensional vector embeddings, which are numerical representations of data like text or images.

- They excel at similarity searches using distance metrics (e.g., cosine similarity), enabling applications like semantic search, recommendation engines, and other AI-powered features.
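
As a rough illustration of similarity search, the sketch below ranks stored vectors by cosine similarity using a brute-force scan. The function names and the tiny two-dimensional "embeddings" are invented for the example; real vector databases use approximate indexes rather than comparing against every vector.

```python
import math


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / norm


def top_k(query, index, k=1):
    """Return the names of the k stored vectors most similar to `query`."""
    ranked = sorted(
        index.items(),
        key=lambda item: cosine_similarity(query, item[1]),
        reverse=True,
    )
    return [name for name, _ in ranked[:k]]


# Hypothetical embeddings: "cat" and "dog" point in similar directions, "car" does not.
index = {"cat": [1.0, 0.0], "dog": [0.9, 0.1], "car": [0.0, 1.0]}
print(top_k([1.0, 0.05], index, k=2))
```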

IV. Reliability and Fault Tolerance

This section explores patterns and strategies for building resilient systems that can withstand and recover from failures.

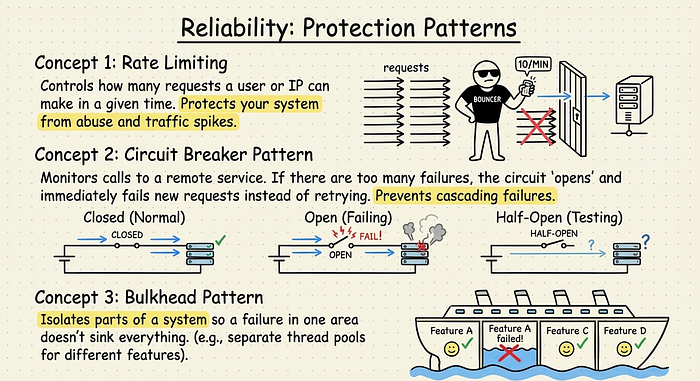

Rate Limiting

- Function: Controls the frequency of requests a user or client can make to an API or service within a specific time window.

- Purpose: Protects backend services from abuse, accidental overload, and denial-of-service attacks.

- Strategies: Common algorithms include fixed window, sliding window, and token bucket.
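
Of the algorithms listed, the token bucket is perhaps the easiest to sketch. In the illustration below, the `TokenBucket` class, its parameter names, and the injectable `clock` argument (added so the behavior can be checked without real waiting) are assumptions for the example.

```python
import time


class TokenBucket:
    def __init__(self, capacity, refill_per_second, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock
        self.tokens = float(capacity)  # start with a full bucket
        self.last_refill = clock()

    def allow(self, cost=1):
        """Return True and spend tokens if the request fits, else False."""
        now = self.clock()
        elapsed = now - self.last_refill
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last_refill = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

The capacity sets how large a burst is tolerated, while the refill rate sets the sustained request rate; a service would usually keep one bucket per user or API key.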

Circuit Breaker Pattern

- A pattern that prevents an application from repeatedly trying to execute an operation that is likely to fail.

- Mechanism: A circuit breaker monitors calls to a downstream service. If the number of failures exceeds a threshold, the breaker “opens,” and subsequent calls fail immediately without attempting to contact the service. After a timeout, the breaker enters a “half-open” state to test if the service has recovered.
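
The closed, open, and half-open states can be sketched as follows. This is a simplified, single-threaded illustration: the `CircuitBreaker` and `CircuitOpenError` names, the consecutive-failure counting rule, and the injectable `clock` are assumptions, and real libraries offer richer policies.

```python
import time


class CircuitOpenError(Exception):
    """Raised when a call is rejected without contacting the downstream service."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the breaker is closed

    @property
    def state(self):
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_timeout:
            return "half-open"
        return "open"

    def call(self, func, *args, **kwargs):
        if self.state == "open":
            raise CircuitOpenError("failing fast; downstream presumed unhealthy")
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            # Trip on reaching the threshold, or re-open after a failed half-open probe.
            if self.failures >= self.failure_threshold or self.opened_at is not None:
                self.opened_at = self.clock()
            raise
        # Any success closes the breaker and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

Callers wrap each downstream request in `breaker.call(...)` and treat `CircuitOpenError` as a signal to use a fallback instead of waiting on a service that is known to be struggling.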

Bulkhead Pattern

- An application design pattern that isolates system elements into pools so that if one fails, the others can continue to function.

- Named after the partitioned sections of a ship’s hull, this pattern can be implemented by using separate thread pools or connection pools for different services, preventing a failure in one area from cascading and taking down the entire system.

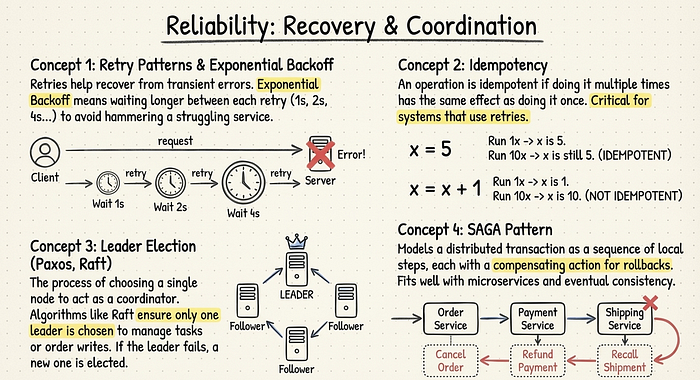

Retry Patterns and Exponential Backoff

- Retries: A mechanism for handling transient failures by automatically re-attempting a failed operation.

- Exponential Backoff: A crucial enhancement to retries where the delay between attempts increases exponentially (e.g., 1s, 2s, 4s). This prevents a client from overwhelming a struggling service with rapid-fire retries. Adding “jitter” (a small random delay) is also recommended to avoid synchronized retry storms.
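
A retry helper along these lines might look like the sketch below. The function name, its defaults, and the injectable `sleep` and `rng` parameters are assumptions for illustration. It also retries on any exception for brevity, whereas real code should retry only errors known to be transient.

```python
import random
import time


def retry_with_backoff(operation, max_attempts=5, base_delay=1.0, max_delay=30.0,
                       jitter=0.5, sleep=time.sleep, rng=random.random):
    """Call `operation` until it succeeds or `max_attempts` is reached."""
    for attempt in range(max_attempts):
        try:
            return operation()
        except Exception:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            # 1s, 2s, 4s, ... capped at max_delay, plus a small random jitter.
            delay = min(max_delay, base_delay * 2 ** attempt) + rng() * jitter
            sleep(delay)
```

The cap keeps the wait bounded after many failures, and the jitter spreads out clients that all started failing at the same moment.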

Idempotency

- An operation is idempotent if it can be performed multiple times with the same result as performing it once. For example, setting a value is idempotent, while incrementing a counter is not.

- Idempotency is critical in distributed systems where network failures can lead to retries, ensuring that a re-sent request does not cause unintended side effects like duplicate transactions.
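
One common way to make a non-idempotent operation safe to retry is to have the client attach a unique key to each logical request. The sketch below illustrates that idea; the `PaymentService` class, the `idempotency_key` parameter, and the in-memory result store are hypothetical, and a real service would persist the keys.

```python
class PaymentService:
    def __init__(self):
        self.total_charged = 0
        self._results = {}  # idempotency key -> response already returned

    def charge(self, idempotency_key: str, amount: int) -> dict:
        if idempotency_key in self._results:
            # A retry of a request we already handled: replay the stored response.
            return self._results[idempotency_key]
        self.total_charged += amount
        result = {"status": "charged", "amount": amount}
        self._results[idempotency_key] = result
        return result


service = PaymentService()
service.charge("order-42", 50)
service.charge("order-42", 50)  # network retry of the same request
print(service.total_charged)    # the customer is charged once
```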

Heartbeat

- A periodic signal sent from a node or service to a monitoring system to indicate it is alive and functioning correctly.

- If the monitoring system stops receiving heartbeats from a node, it can assume the node has failed and trigger a failover process.

Leader Election

- The process in a distributed system by which a single node is chosen to assume a special role, such as a coordinator or primary for writes.

- Consensus algorithms like Paxos and Raft provide fault-tolerant mechanisms to ensure that all nodes agree on a single leader and can elect a new one if the current leader fails.

Distributed Transactions (SAGA Pattern)

- The SAGA pattern is a way to manage data consistency across multiple microservices without using traditional two-phase commit locks.

- A transaction is structured as a sequence of local transactions, each with a corresponding compensating action. If any step fails, the compensating actions are executed in reverse order to undo the preceding steps, thus maintaining overall consistency.
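
The compensate-in-reverse behavior can be sketched with a small orchestrator. The `run_saga` function and the step names used with it are illustrative assumptions; a real saga would also persist its progress and handle compensations that themselves fail.

```python
def run_saga(steps):
    """Run (action, compensation) pairs in order.

    If an action raises, run the compensations of the already-completed
    steps in reverse order and return False; return True if all succeed.
    """
    completed = []
    for action, compensate in steps:
        try:
            action()
        except Exception:
            for undo in reversed(completed):
                undo()
            return False
        completed.append(compensate)
    return True
```

For example, if "reserve stock" and "create shipment" succeed but "charge card" fails, the orchestrator cancels the shipment first and releases the stock second.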

Two-Phase Commit (2PC)

- A protocol used to achieve atomic transactions across multiple distributed nodes.

- Phase 1 (Prepare): A coordinator asks all participating nodes if they are ready to commit.

- Phase 2 (Commit/Abort): If all participants vote “yes,” the coordinator instructs them to commit. If any vote “no” or fail to respond, the coordinator instructs all to roll back.

- 2PC provides strong consistency but is prone to blocking if the coordinator fails and can be a performance bottleneck.
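
The two phases can be sketched in-process as below. This is only an outline of the voting logic: the `Participant` class and `two_phase_commit` function are hypothetical, and the sketch leaves out the timeouts, durable logging, and coordinator-failure handling that make real 2PC difficult.

```python
class Participant:
    def __init__(self, name, can_commit=True):
        self.name = name
        self.can_commit = can_commit  # stand-in for "local checks passed"
        self.state = "init"

    def prepare(self):
        # Vote yes only if this node is able to commit.
        self.state = "prepared" if self.can_commit else "aborted"
        return self.can_commit

    def commit(self):
        self.state = "committed"

    def rollback(self):
        self.state = "aborted"


def two_phase_commit(participants):
    # Phase 1 (prepare): collect a vote from every participant.
    votes = [p.prepare() for p in participants]
    # Phase 2 (commit/abort): commit only on a unanimous yes.
    if all(votes):
        for p in participants:
            p.commit()
        return True
    for p in participants:
        p.rollback()
    return False
```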

V. Caching and Messaging

This section describes key technologies for improving performance and decoupling system components through in-memory data storage and asynchronous communication.

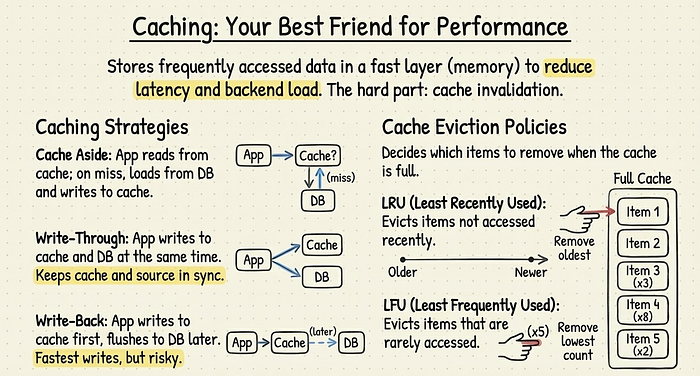

Caching

- Definition: Storing copies of frequently accessed data in a fast, temporary storage layer (typically memory) to serve future requests more quickly.

- Benefits: Reduces latency for end-users and decreases the load on backend systems like databases.

- Challenge: The primary difficulty with caching is “cache invalidation” — ensuring that stale data is removed or updated when the source data changes.

Caching Strategies

- Cache-Aside: The application is responsible for managing the cache. It first checks the cache; on a miss, it reads data from the database, then writes that data into the cache for future requests.

- Write-Through: The application writes data to the cache and the database simultaneously. This ensures the cache is always consistent with the database but adds latency to write operations.

- Write-Back: The application writes data only to the cache, which acknowledges the write immediately. The data is then flushed to the database asynchronously at a later time. This offers very low write latency but risks data loss if the cache fails before the data is persisted.
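
Cache-aside is the strategy most often implemented directly in application code, so it is the one sketched here. The `SlowDatabase` and `CacheAsideRepository` classes are hypothetical stand-ins, and invalidating the cache entry on write is one common choice rather than the only option.

```python
class SlowDatabase:
    """Stand-in for a real database; counts reads so cache hits are visible."""

    def __init__(self, rows):
        self.rows = dict(rows)
        self.reads = 0

    def get(self, key):
        self.reads += 1
        return self.rows.get(key)

    def put(self, key, value):
        self.rows[key] = value


class CacheAsideRepository:
    def __init__(self, db):
        self.db = db
        self.cache = {}

    def read(self, key):
        if key in self.cache:            # cache hit
            return self.cache[key]
        value = self.db.get(key)         # cache miss: read from the database
        if value is not None:
            self.cache[key] = value      # populate the cache for future requests
        return value

    def write(self, key, value):
        self.db.put(key, value)
        self.cache.pop(key, None)        # invalidate so the next read refetches
```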

Cache Eviction Policies

- LRU (Least Recently Used): When the cache is full, the item that has been accessed least recently is removed.

- LFU (Least Frequently Used): When the cache is full, the item that has been accessed the fewest times is removed.

- Other policies include FIFO (First-In, First-Out) and random replacement. The choice of policy depends on the application’s access patterns.
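
LRU is straightforward to sketch with Python's `collections.OrderedDict`, which remembers insertion order and can move an entry to the end in constant time. The `LRUCache` class and its method names are assumptions for the example.

```python
from collections import OrderedDict


class LRUCache:
    def __init__(self, capacity):
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.capacity = capacity
        self._items = OrderedDict()  # oldest entry first, newest last

    def get(self, key, default=None):
        if key not in self._items:
            return default
        self._items.move_to_end(key)  # mark as most recently used
        return self._items[key]

    def put(self, key, value):
        self._items[key] = value
        self._items.move_to_end(key)
        if len(self._items) > self.capacity:
            self._items.popitem(last=False)  # evict the least recently used entry
```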

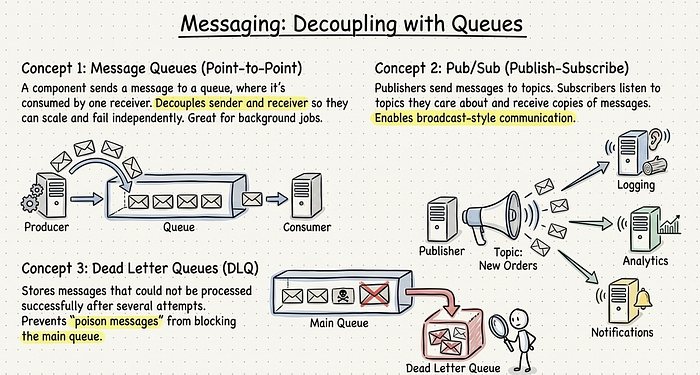

Message Queues (Point-to-Point)

- A message queue enables asynchronous communication between services. A “producer” sends a message to a queue, and a “consumer” retrieves it for processing at a later time.

- Each message is typically processed by only one consumer. This pattern decouples the sender and receiver, allowing them to operate and scale independently. It is commonly used for background jobs.

Pub/Sub (Publish-Subscribe)

- A messaging pattern where “publishers” send messages to a “topic” without knowledge of the “subscribers.” Any number of subscribers can listen to a topic and receive a copy of every message sent to it.

- This enables one-to-many, broadcast-style communication and is central to event-driven architectures.

Dead Letter Queues (DLQ)

- A secondary queue used to store messages that could not be processed successfully after a certain number of retries.

- Moving “poison messages” to a DLQ prevents them from blocking the main processing queue. Engineers can later inspect the DLQ to diagnose and resolve the underlying issues.
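
The retry-then-park flow can be sketched with an in-memory queue. The `drain` function, the `(message, attempts)` tuple layout, and the limit of three attempts are assumptions for illustration; managed queue services usually provide this behavior through configuration.

```python
from collections import deque


def drain(queue, handler, dead_letters, max_attempts=3):
    """Process every message in `queue`; park repeat failures in `dead_letters`.

    `queue` holds (message, attempts_so_far) tuples.
    """
    while queue:
        message, attempts = queue.popleft()
        try:
            handler(message)
        except Exception as exc:
            attempts += 1
            if attempts >= max_attempts:
                # Give up: keep the message and the error for later inspection.
                dead_letters.append((message, repr(exc)))
            else:
                queue.append((message, attempts))  # requeue for another try
```

Because the failing message is requeued behind the others and eventually parked, healthy messages continue to flow instead of waiting behind it.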

VI. Observability and Security

This section covers essential concepts for monitoring system health, understanding behavior, and implementing robust security measures.

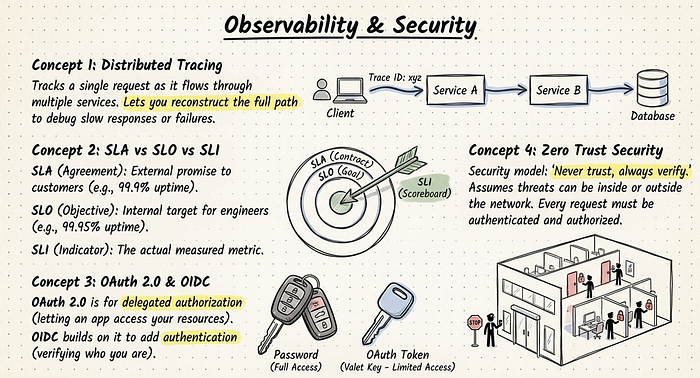

Distributed Tracing

- A method for monitoring and profiling applications, especially those built using a microservices architecture.

- It tracks a single request as it travels through multiple services, assigning a unique trace ID that allows developers to visualize the entire request path, identify bottlenecks, and debug cross-service issues.

SLA vs. SLO vs. SLI

- SLA (Service Level Agreement): A formal contract with a customer that defines the level of service they can expect, often with financial penalties for non-compliance (e.g., “99.9% uptime”).

- SLO (Service Level Objective): An internal target for system reliability, typically set stricter than the SLA so that teams have a margin before the contract is breached. This is the goal that engineering teams strive to meet.

- SLI (Service Level Indicator): The actual, quantitative metric used to measure compliance with an SLO (e.g., the success rate of HTTP requests). The SLI is the “scoreboard” that measures performance.

OAuth 2.0 and OIDC

- OAuth 2.0: An authorization framework that allows a user to grant a third-party application limited access to their resources on another service without sharing their credentials.

- OIDC (OpenID Connect): A thin layer built on top of OAuth 2.0 that adds an authentication component. It allows an application to verify a user’s identity and obtain basic profile information. Together, they form the foundation of modern “Login with…” features.

TLS/SSL Handshake

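- TLS (Transport Layer Security)/SSL (Secure Sockets Layer): Cryptographic protocols that provide secure communication over a computer network. SSL is the deprecated predecessor of TLS, although the two names are still often used interchangeably.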

- The handshake is the initial process where the client and server establish a secure connection. During the handshake, they agree on an encryption cipher, exchange cryptographic keys, and authenticate the server via its digital certificate.

Zero Trust Security

- A security model based on the principle of “never trust, always verify.” It assumes that threats can originate from anywhere, both inside and outside the network perimeter.

- In a Zero Trust architecture, every request must be authenticated, authorized, and encrypted, regardless of its origin. Access is granted based on user identity and device posture, not on network location.

You can think of system design like running a professional restaurant. Vertical scaling is buying a bigger stove, while horizontal scaling is hiring a whole team of chefs. Load balancing is the host at the front door assigning customers to different tables so no waiter is overwhelmed. A CDN is like having pre-made snacks available at local convenience stores so people don’t have to travel to your main kitchen for everything. Finally, Circuit Breakers are like a safety fuse in the kitchen: if one appliance starts smoking, it cuts the power immediately to that section so the whole restaurant doesn’t burn down.

Source: https://medium.com/@MaheshwariRishabh/50-core-system-design-concepts-6828ed73c2e8