Prompt

Define all LeanIX Inventory components for the following context:

1. Primary Capability: Document Management

2. Secondary Capabilities: Store Document, Perform OCR, Identify Fields, and Identify Metadata

3. Applications: AWS Textract, AWS Rekognition, DynamoDB, API Gateway, Lambda, and AWS S3

4. IT Components: DynamoDB, Java, and TypeScript

Do not limit yourself to the items listed above. They are a starting point for developing a complete Inventory Roadmap in LeanIX.

Gemini

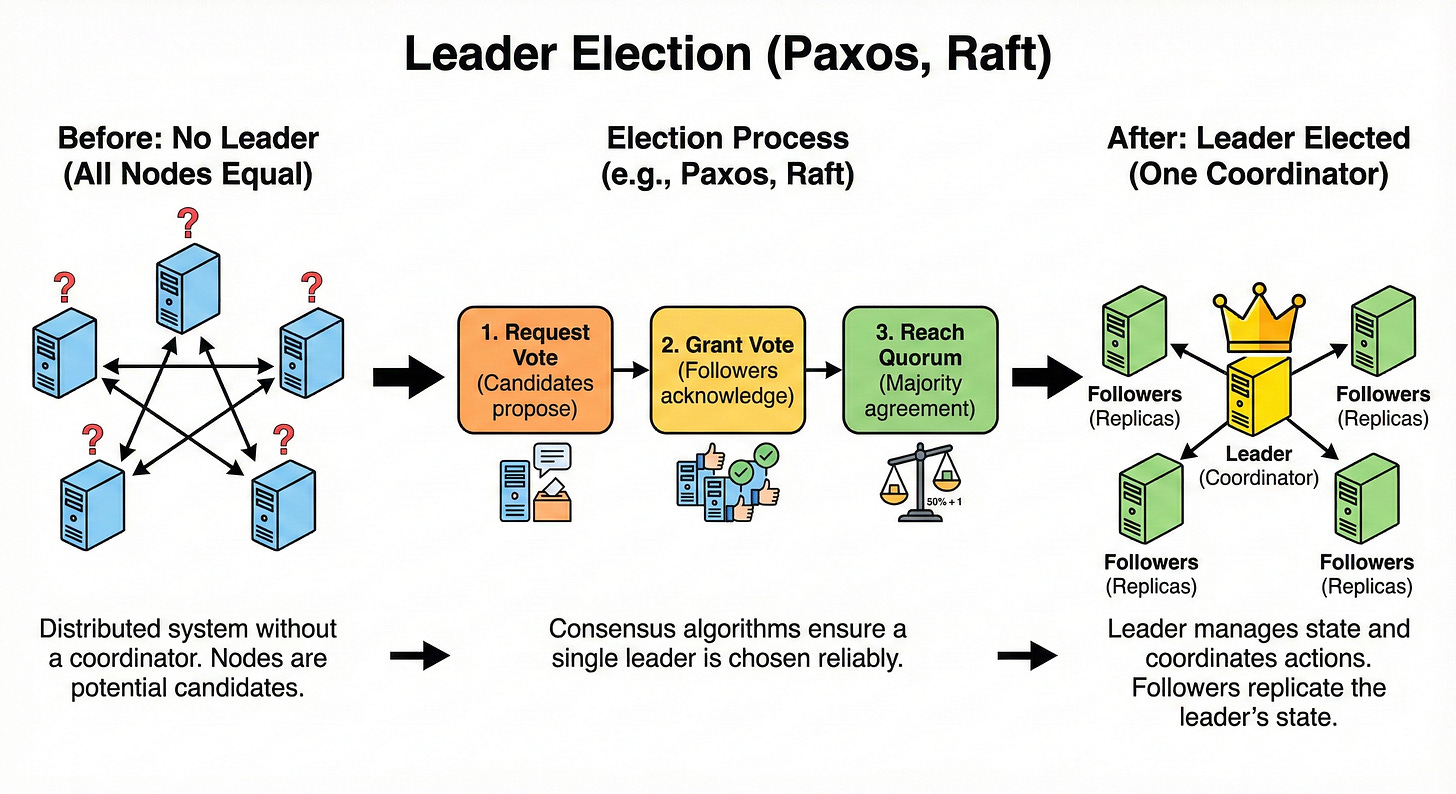

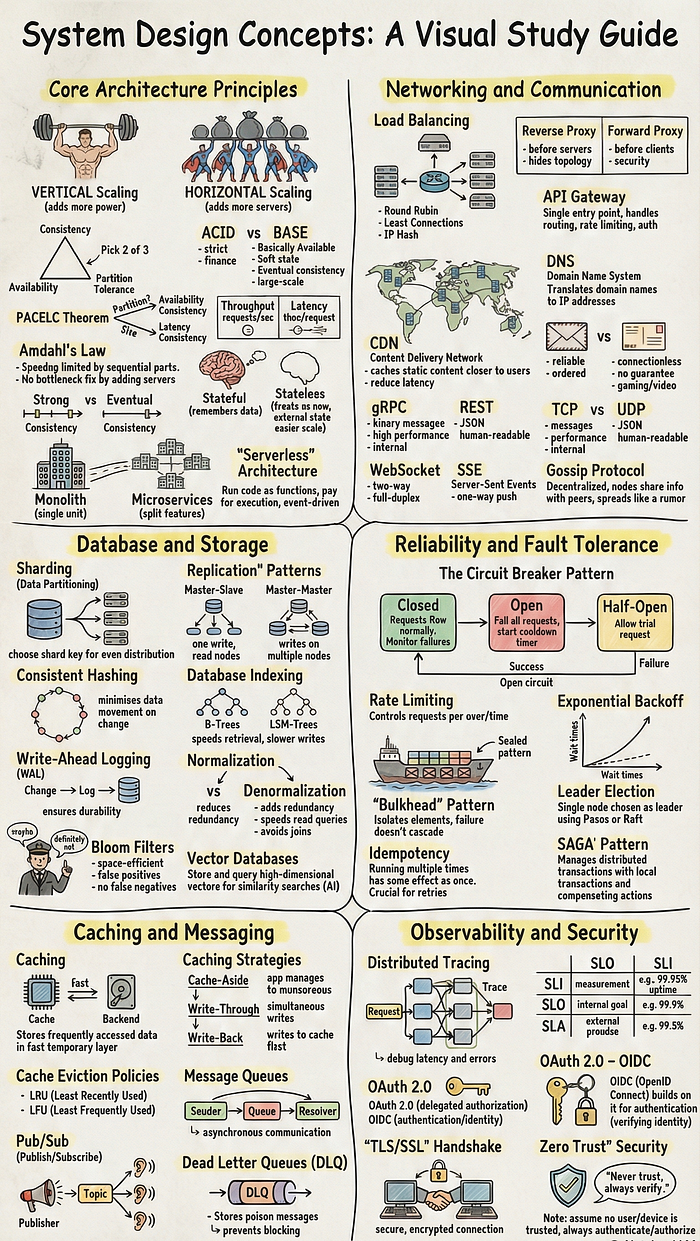

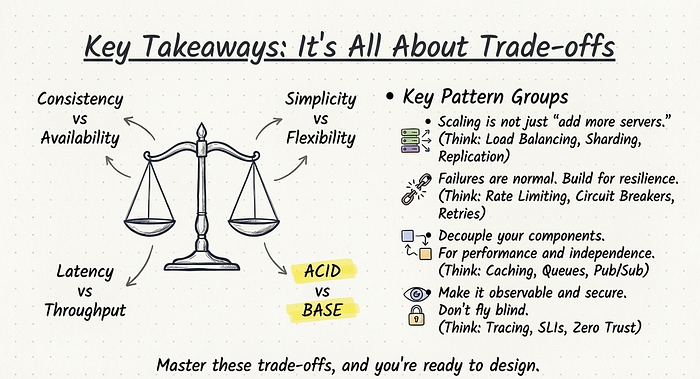

To build a robust inventory in LeanIX, listing the items is not enough; you need to understand how they connect through Fact Sheets. LeanIX uses a graph-based architecture, in which insight comes from the relationships between the business, application, and technology layers.

Below is how these components should be structured, expanding your starting list into a complete data-maturity Roadmap.

1. Business Architecture (Business Capabilities)

Capabilities represent what the company does, not how it does it. They are stable and serve as the anchor for the entire inventory.

- Business Capability (L1): Document Management.
- Business Capability (L2):
  - Store Document.
  - Intelligent Document Processing (IDP).
    - Sub-items (L3): Perform OCR, Identify Fields, Identify Metadata.
- User Groups: Identify who consumes these capabilities (e.g., Operations, Legal, HR).

2. Application Architecture (Software and Services)

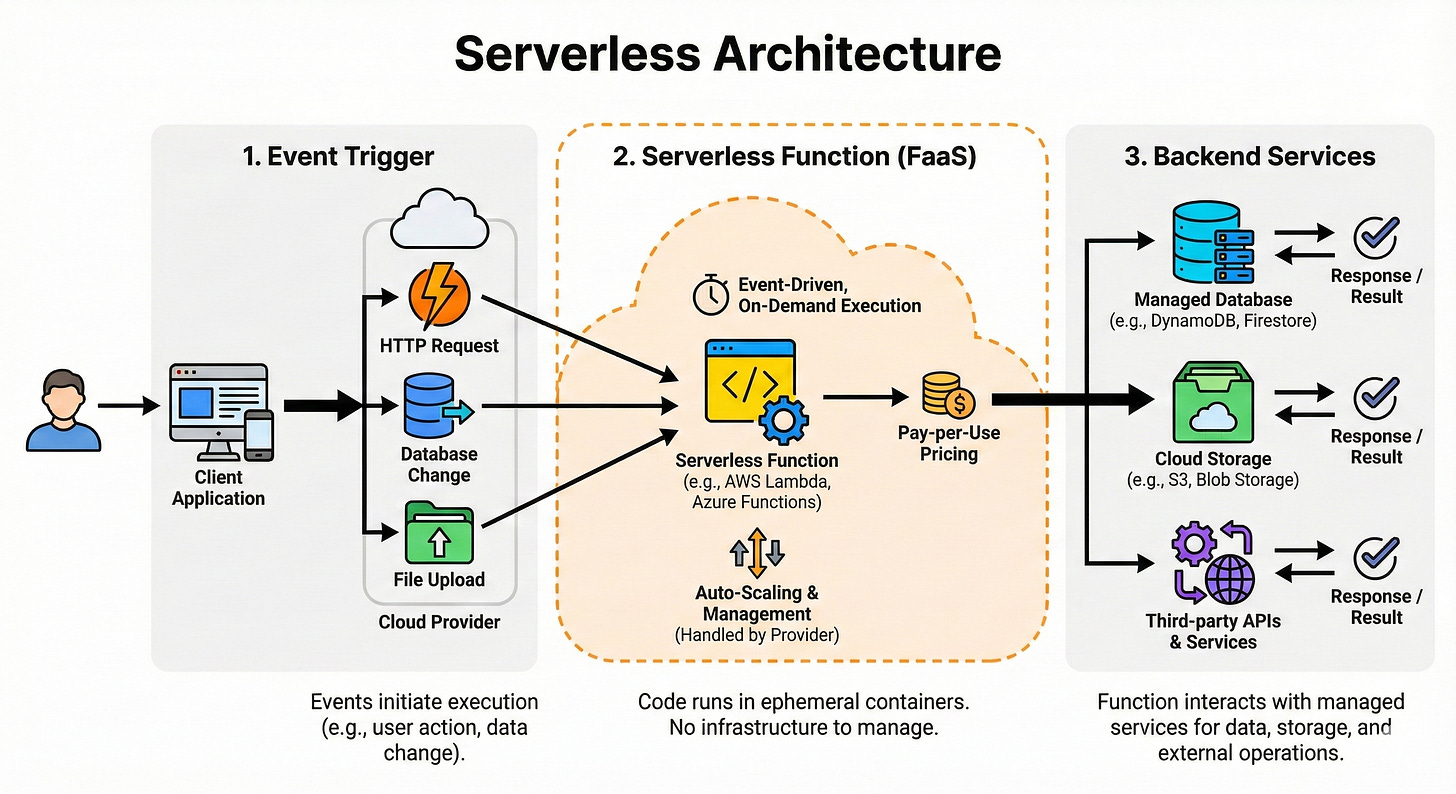

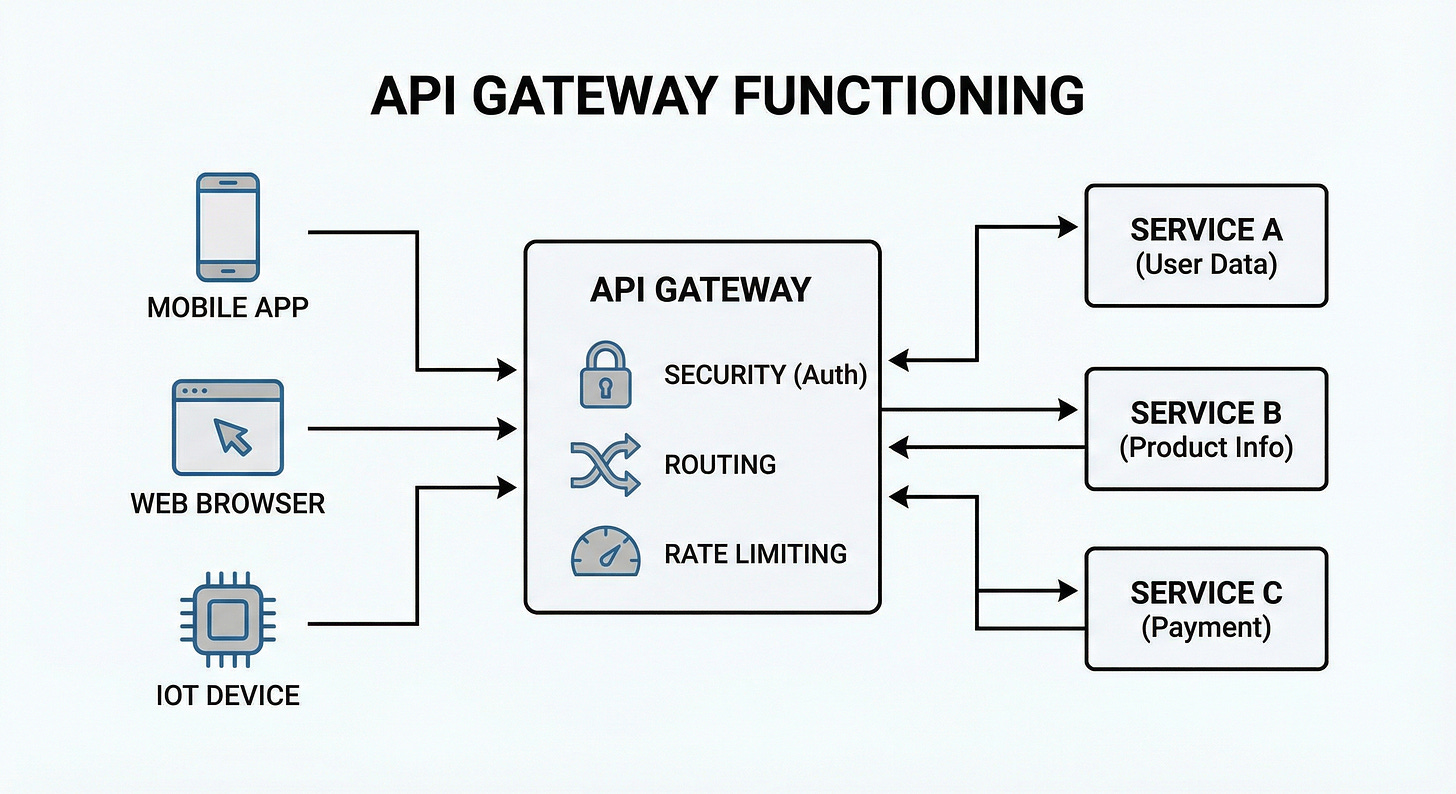

Here we define the tools that support the capabilities above. In the AWS context, we treat specific services as applications when they deliver a clear business function.

- Applications:
  - AWS Textract: Responsible for OCR and data extraction.
  - AWS Rekognition: Used for visual analysis and pattern identification.
  - Document Processor API (Custom): The logic orchestrated by API Gateway and Lambda functions.
- Interface: Essential for the Roadmap. Define the integrations between S3 (source) and Textract (processing).

3. Technology Architecture (IT Components)

IT Components represent the technical stack and are fundamental to obsolescence management (Lifecycle).

- IT Components:
  - Databases: DynamoDB (NoSQL).
  - Storage: AWS S3.
  - Runtimes/Languages: Java (v17/21), TypeScript (Node.js).
  - Compute: AWS Lambda.
- Providers: Amazon Web Services (AWS).

4. Relationship Structure (The Heart of LeanIX)

For the inventory to be useful, you should configure the following associations:

| From (Fact Sheet) | To (Fact Sheet) | Description |
|---|---|---|
| Application | Business Capability | Shows which tool supports OCR or storage. |
| Application | IT Component | Defines the technology stack (e.g., Lambda uses Node.js/TypeScript). |
| Application | User Group | Indicates who uses the Document Management system. |
| IT Component | Provider | Links DynamoDB to AWS for cost and support analysis. |
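The associations in the table can be pictured as typed edges in a small graph. The following is a minimal sketch, assuming a hypothetical in-memory model: the `FactSheet` and `Inventory` classes and the type names are illustrative only and are not part of any LeanIX API.

```python
from dataclasses import dataclass

# Allowed (source type, target type) pairs, taken from the table above.
ALLOWED_RELATIONS = {
    ("Application", "BusinessCapability"),
    ("Application", "ITComponent"),
    ("Application", "UserGroup"),
    ("ITComponent", "Provider"),
}


@dataclass(frozen=True)
class FactSheet:
    """Hypothetical stand-in for a Fact Sheet: just a type and a name."""
    type: str
    name: str


class Inventory:
    def __init__(self):
        self.relations = []  # list of (source FactSheet, target FactSheet)

    def relate(self, source, target):
        # Reject any relation type that the table does not model.
        if (source.type, target.type) not in ALLOWED_RELATIONS:
            raise ValueError(f"{source.type} -> {target.type} is not a modeled relation")
        self.relations.append((source, target))

    def targets_of(self, source, target_type):
        # Names of all Fact Sheets of target_type directly related to source.
        return [t.name for s, t in self.relations if s == source and t.type == target_type]


inventory = Inventory()
textract = FactSheet("Application", "AWS Textract")
processor = FactSheet("Application", "Document Processor API")
dynamodb = FactSheet("ITComponent", "DynamoDB")

inventory.relate(textract, FactSheet("BusinessCapability", "Perform OCR"))
inventory.relate(processor, FactSheet("ITComponent", "TypeScript (Node.js)"))
inventory.relate(processor, dynamodb)
inventory.relate(dynamodb, FactSheet("Provider", "Amazon Web Services (AWS)"))

print(inventory.targets_of(processor, "ITComponent"))
```

Validating the relation type at insertion time keeps the sketch close to the idea that only certain Fact Sheet pairs are meaningful; a real workspace enforces this through its own meta model.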

Suggested Expansion for the Roadmap

For a professional-grade inventory, I recommend adding these components, which you did not mention:

- Data Objects: Define the "Document" and "Metadata" objects. This lets you track where sensitive data flows, which matters for LGPD (Brazil's General Data Protection Law).
- Projects / Transformations: Create project Fact Sheets for the development of this OCR pipeline. This links the project cost to the value of the application.
- Lifecycle: Enter the End of Support dates for Java and for the TypeScript versions so that LeanIX can generate technology-risk reports automatically.
- IT Cost: Assign AWS consumption costs to each Application for a TCO (Total Cost of Ownership) view.

Would you like me to detail how to configure tag fields to classify the criticality of these documents in the context of LGPD?

Prompt

Yes

Gemini

Excelente. Para elevar o nível do seu inventário no LeanIX e torná-lo um verdadeiro instrumento de governança e Roadmap, precisamos cruzar a tecnologia com a conformidade e o ciclo de vida.

Abaixo, detalho os componentes adicionais e os atributos (campos) que devem ser configurados para cada um, focando em Privacidade (LGPD) e Obsolescência.

1. Data Objects (Gestão de Dados e LGPD)

No LeanIX, o Data Object conecta a aplicação ao dado de negócio. Para o seu contexto, isso é vital para entender onde circulam informações sensíveis.

-

Objetos Sugeridos:

-

Documento de Identidade (RG/CPF): Atribuído ao processo de OCR.

-

Metadados de Processo: Dados técnicos extraídos.

-

Payload de Imagem: O arquivo bruto no S3.

-

-

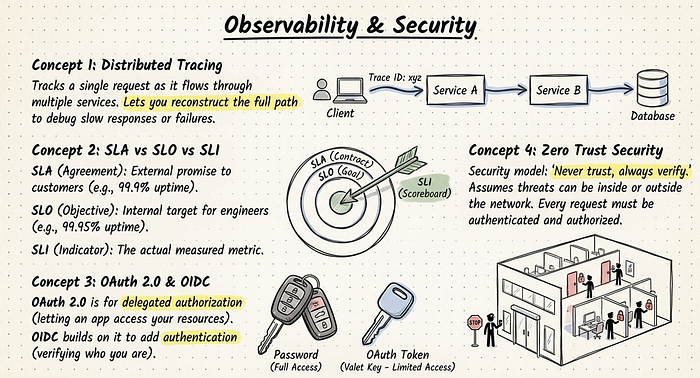

Atributos de LGPD (Tags/Fields):

-

Data Classification: Público, Interno, Confidencial ou Restrito.

-

Privacy Sensitivity: Sim/Não (Dados Pessoais ou Sensíveis).

-

Retention Period: Quanto tempo o S3 deve manter o documento antes da deleção automática.

-

2. Lifecycle Management (Roadmap Planning)

The Roadmap in LeanIX is born from filling in the lifecycle dates on IT Components.

- For Java and TypeScript:
  - Configure the phases: Plan, Phase In, Active, Phase Out, End of Life.
  - This lets the Lifecycle Catalog report show automatically when your Lambda code will need refactoring because of runtime obsolescence.
- For AWS Services:
  - Although they are "evergreen" (always kept up to date by AWS), you should flag API versions or specific DynamoDB instances that may be deprecated.
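To make the lifecycle phases concrete, here is a minimal sketch of how a current phase can be derived from phase start dates. It assumes a hypothetical dictionary layout and placeholder dates; the dates are illustrative only and are not real vendor support dates.

```python
from datetime import date

# Phase order as listed above.
PHASES = ["Plan", "Phase In", "Active", "Phase Out", "End of Life"]


def current_phase(lifecycle, today):
    """Return the latest phase whose start date is on or before `today`.

    `lifecycle` maps phase names to start dates; phases may be missing.
    Returns None if no phase has started yet.
    """
    phase = None
    for name in PHASES:
        start = lifecycle.get(name)
        if start is not None and start <= today:
            phase = name
    return phase


# Placeholder dates for illustration only.
example_runtime = {
    "Active": date(2024, 1, 1),
    "Phase Out": date(2026, 1, 1),
    "End of Life": date(2027, 1, 1),
}

print(current_phase(example_runtime, date(2025, 6, 1)))  # Active
print(current_phase(example_runtime, date(2026, 6, 1)))  # Phase Out
```

The sketch assumes phase dates are entered in chronological order; a report built on this idea would flag any IT Component whose phase is Phase Out or End of Life.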

3. IT Components: Infrastructure and Runtimes

Expanding your list to make sure the architecture is complete:

| Category | IT Component (Examples) | Importance for the Roadmap |
|---|---|---|
| Runtime | Node.js 20.x / Java 21 | Defines the security update strategy. |
| Frameworks | AWS SDK, Spring Boot, Serverless Framework | Affects development speed and technical debt. |
| Infrastructure as Code | Terraform or AWS CloudFormation | Essential for tracking how the infrastructure is provisioned. |
| Security | AWS IAM Roles, AWS KMS (encryption) | Ensures that storage in S3 and DynamoDB is compliant. |

4. Business Process (How the Business Flows)

While the Capability says "what" we do, the Business Process details the sequence. In your case:

- Document Upload (trigger).
- S3 -> Lambda Trigger (integration).
- OCR Processing (AWS Textract).
- Metadata Persistence (DynamoDB).

5. Next Steps for the Roadmap in LeanIX

To turn this data into a strategic view, you should now focus on Quality Metrics:

- Technical Fit: Assess whether Java/TypeScript meets the performance expected for OCR.
- Functional Fit: Is AWS Textract identifying fields with the accuracy the business needs?
- Business Criticality: If DynamoDB goes down, what is the financial impact on Document Management?

Golden Tip: Use the LeanIX Visualizer to generate a dependency graph. If you remove the "Java" IT Component, you will see instantly which Applications stop working and which Business Capabilities are affected.
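The dependency question in the tip is a reachability query over the relationship graph. The following is a minimal sketch, assuming a hypothetical set of dependencies (which application relies on which IT Component is invented here for illustration) and plain Python rather than any LeanIX feature.

```python
from collections import defaultdict, deque

# Edges point from a dependency to whatever relies on it.
depended_on_by = defaultdict(list)


def add_dependency(dependent, dependency):
    depended_on_by[dependency].append(dependent)


# Hypothetical dependencies, for illustration only.
add_dependency("Document Processor API", "Java")
add_dependency("Document Processor API", "DynamoDB")
add_dependency("Store Document", "Document Processor API")
add_dependency("Perform OCR", "AWS Textract")


def impacted_by(fact_sheet):
    """Breadth-first walk over everything that transitively depends on fact_sheet."""
    seen, queue = [], deque([fact_sheet])
    while queue:
        current = queue.popleft()
        for dependent in depended_on_by[current]:
            if dependent not in seen:
                seen.append(dependent)
                queue.append(dependent)
    return seen


print(impacted_by("Java"))
```

With these sample edges, removing "Java" reaches the application first and then the capability it supports, which is the same two-hop reading the tip describes.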

Would you like me to put together an example Cost Matrix (TCO) associating the consumption of these AWS services with your business capabilities?

Prompt

Yes

Gemini

To turn the technical inventory into a business-value view, we need to speak the language of TCO (Total Cost of Ownership). In LeanIX, this is done by associating the costs of IT Components (AWS services) with Applications, which in turn serve Business Capabilities.

Here is how to structure this matrix for your Document Processing scenario:

1. Cost Mapping by Component (Estimated Monthly)

For the roadmap, you should categorize AWS costs by consumption type (Fixed vs. Variable):

| IT Component | Cost Model | Cost Driver (Unit) | Impact on TCO |
|---|---|---|---|
| AWS Textract | Variable | Per page processed | High (direct business cost) |
| AWS Rekognition | Variable | Per image/minute | Medium (depends on volume) |
| AWS Lambda | Variable | Per invocation / duration | Low (scalable) |
| DynamoDB | Mixed | WCU/RCU or on-demand | Medium (storage cost grows) |
| AWS S3 | Variable | GB stored / requests | Low (but cumulative) |
| API Gateway | Variable | Millions of calls | Low |

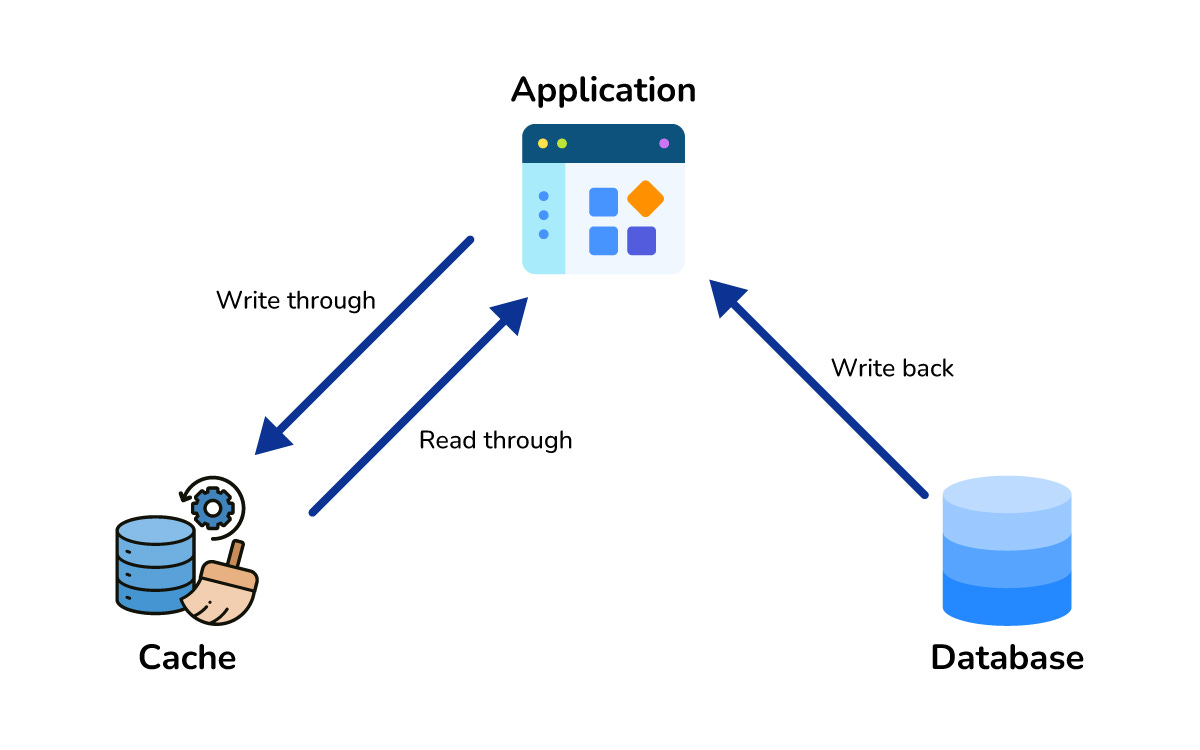

2. Cost Attribution to Applications (Application Cost)

In LeanIX, you will create a time-series field or use the Apptio Integration module (if available) to consolidate:

- Application: "Intelligent Extraction Pipeline"
  - Sum of the costs of: Textract + Rekognition + Lambda.
  - Roadmap view: If the cost per document rises above R$ 0.50, the roadmap should plan for code optimization or a model change (e.g., from Rekognition to a custom model on SageMaker).
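The cost-per-document rule above is simple arithmetic: sum the pipeline's service costs and divide by the number of documents processed. The following is a minimal sketch, assuming hypothetical monthly figures in R$; only the R$ 0.50 threshold comes from the text.

```python
COST_PER_DOCUMENT_THRESHOLD = 0.50  # R$, the threshold named in the text


def cost_per_document(monthly_costs, documents_processed):
    """Total monthly cost of the pipeline divided by documents processed."""
    if documents_processed <= 0:
        raise ValueError("documents_processed must be positive")
    return sum(monthly_costs.values()) / documents_processed


# Hypothetical monthly figures in R$, for illustration only.
pipeline_costs = {"Textract": 3000.0, "Rekognition": 800.0, "Lambda": 200.0}

unit_cost = cost_per_document(pipeline_costs, documents_processed=10_000)
needs_optimization = unit_cost > COST_PER_DOCUMENT_THRESHOLD

print(f"R$ {unit_cost:.2f} per document, optimize: {needs_optimization}")
```

With these sample numbers the unit cost is R$ 0.40, below the threshold; halving the volume to 5,000 documents at the same spend would push it to R$ 0.80 and trigger the optimization item on the roadmap.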

3. TCO vs. Business Value Matrix

This matrix is the definitive tool for deciding where to invest (Investment Roadmap):

| Application / Service | Monthly Cost (Est.) | Business Value | Suggested Action (Roadmap) |
|---|---|---|---|
| S3 Storage | Low | Critical | Sustain: Keep as is; focus on expiration policies. |
| OCR (Textract) | High | Very high | Optimize: Review whether all fields are needed, or use pre-OCR filters. |
| Identification (Rekognition) | Medium | Medium | Tolerate: Assess whether the feature is essential for all documents. |
| DynamoDB | Medium | High | Invest: Implement TTL (Time to Live) to purge old data and reduce cost. |

4. Visualization in LeanIX: "Cost Landscape"

Once these values are configured, LeanIX automatically generates the Cost Landscape report. In it, you will see squares (Applications) whose size is proportional to cost and whose color represents criticality or "Technical Fit".

Pro Tip: In your 2026 context, consider adding a "Carbon Footprint" tag to the AWS IT Components. Many companies now tie TCO not only to money but also to the environmental impact of data processing (FinOps + GreenOps).

Suggested Next Step

With the inventory, LGPD, and costs defined, the next level of maturity is Integration Management.

Would you like me to draw the Data Flow (data lineage) to show how the document travels from S3 to DynamoDB through the APIs, mapping the failure points?


Prompt

Yes, draw the Data Flow

Gemini

O desenho do Data Flow (ou Fluxo de Dados) no LeanIX é o que separa um inventário estático de um mapa vivo de arquitetura. No contexto de 2026, com arquiteturas cada vez mais orientadas a eventos (EDA), rastrear a linhagem do dado é fundamental para o Compliance e para a Resiliência Operacional.

Abaixo, detalho como estruturar essa linhagem, mapeando o caminho do documento desde a entrada até o repouso final.

1. Visualização do Fluxo de Dados (Linhagem)

No LeanIX, você utilizará o Data Flow Visualizer. O fluxo para sua capacidade de “Gestão de Documentos” deve seguir esta lógica:

-

Origem (External Group/User): Cliente faz o upload do documento.

-

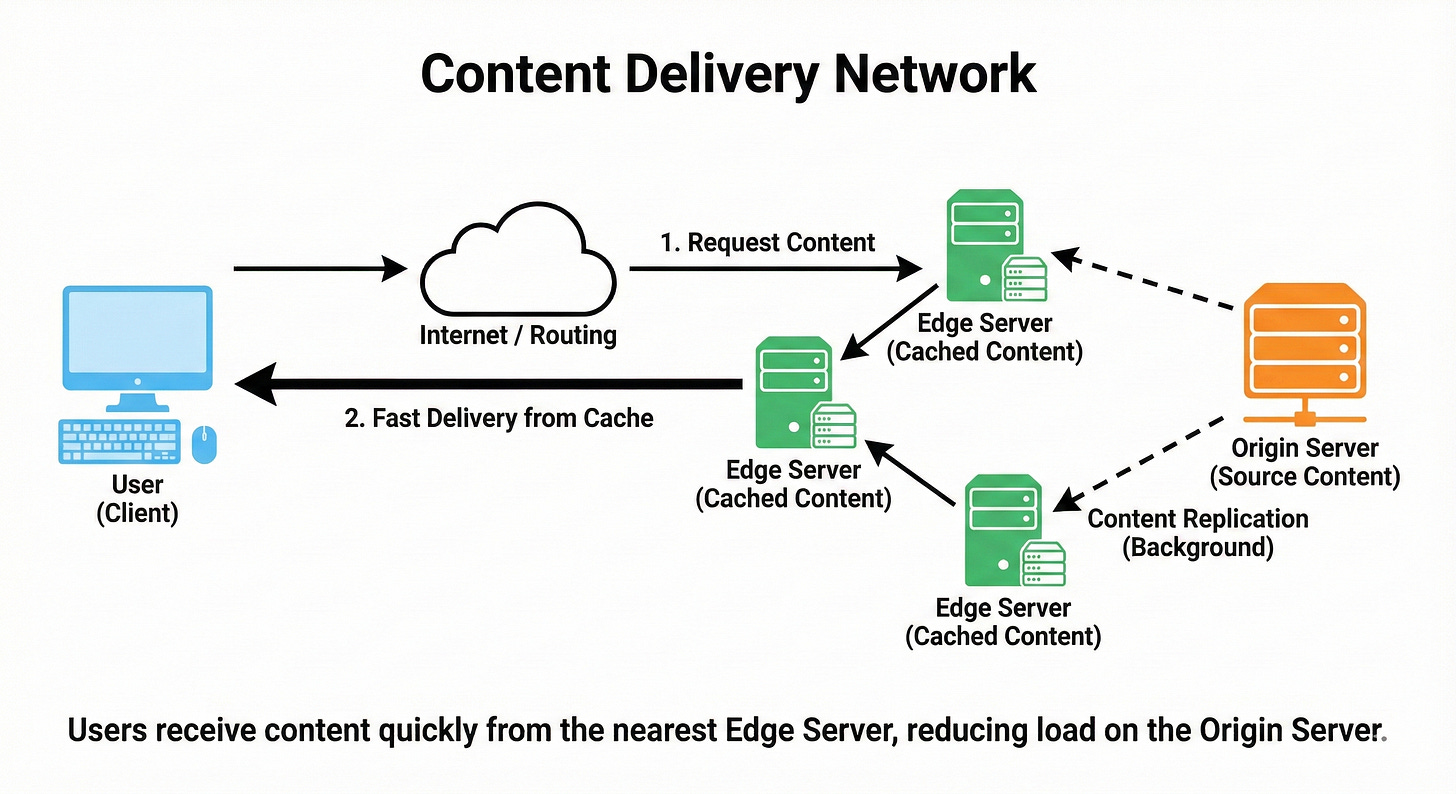

Ponto de Entrada (S3 – Landing Zone): O dado bruto é armazenado.

-

Gatilho (S3 Event): Dispara a função Lambda.

-

Processamento (AWS Textract/Rekognition): O dado é enviado para extração e retorna como metadados estruturados.

-

Destino (DynamoDB): Armazenamento dos metadados e do status do processo.

2. Technical Detail of the Interfaces

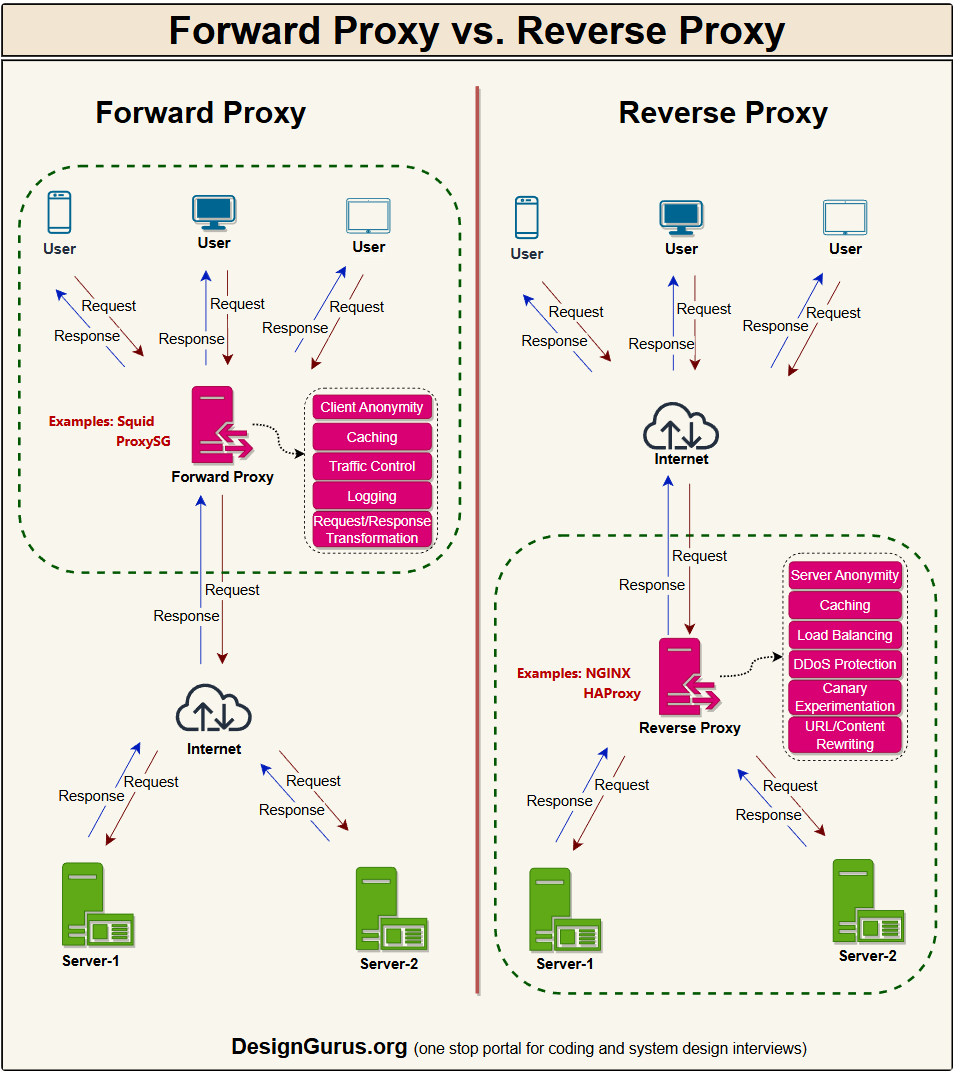

For each "arrow" in the diagram above, LeanIX requires an Interface to be defined. Here is how to configure the main ones:

| Interface | Data Object Type | Frequency / Technology | Governance Note |
|---|---|---|---|
| S3 ➔ Lambda | Notification Event | Real time (async) | Critical failure point: if the trigger fails, the process stops. |
| Lambda ➔ Textract | Image Payload | API (HTTPS/JSON) | Personal data (LGPD) in transit. |
| Lambda ➔ DynamoDB | Extracted Metadata | NoSQL write | Audit point: where the data is "born" as an official record. |
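The interfaces in the table can be treated as directed edges, which makes lineage questions easy to answer. The following is a minimal sketch, assuming a hypothetical `Interface` record; the `carries_personal_data` flag is derived from the governance notes in the table and is not a LeanIX field.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interface:
    """Hypothetical record for one arrow in the data flow."""
    provider: str
    consumer: str
    data_object: str
    carries_personal_data: bool


# One entry per row of the table above.
INTERFACES = [
    Interface("S3", "Lambda", "Notification Event", False),
    Interface("Lambda", "Textract", "Image Payload", True),
    Interface("Lambda", "DynamoDB", "Extracted Metadata", False),
]


def downstream(system):
    """All systems reachable from `system` by following interfaces."""
    reached, stack = [], [system]
    while stack:
        current = stack.pop()
        for interface in INTERFACES:
            if interface.provider == current and interface.consumer not in reached:
                reached.append(interface.consumer)
                stack.append(interface.consumer)
    return reached


personal_data_hops = [
    f"{i.provider} -> {i.consumer}" for i in INTERFACES if i.carries_personal_data
]

print(downstream("S3"))
print(personal_data_hops)
```

Listing the hops that carry personal data gives a starting point for the LGPD review, since those are the interfaces where data in transit needs the closest attention.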

3. Mapeamento de Pontos de Falha e Resiliência (Roadmap)

Ao desenhar o Data Flow, você identifica onde o roadmap de tecnologia precisa atuar para evitar indisponibilidade:

-

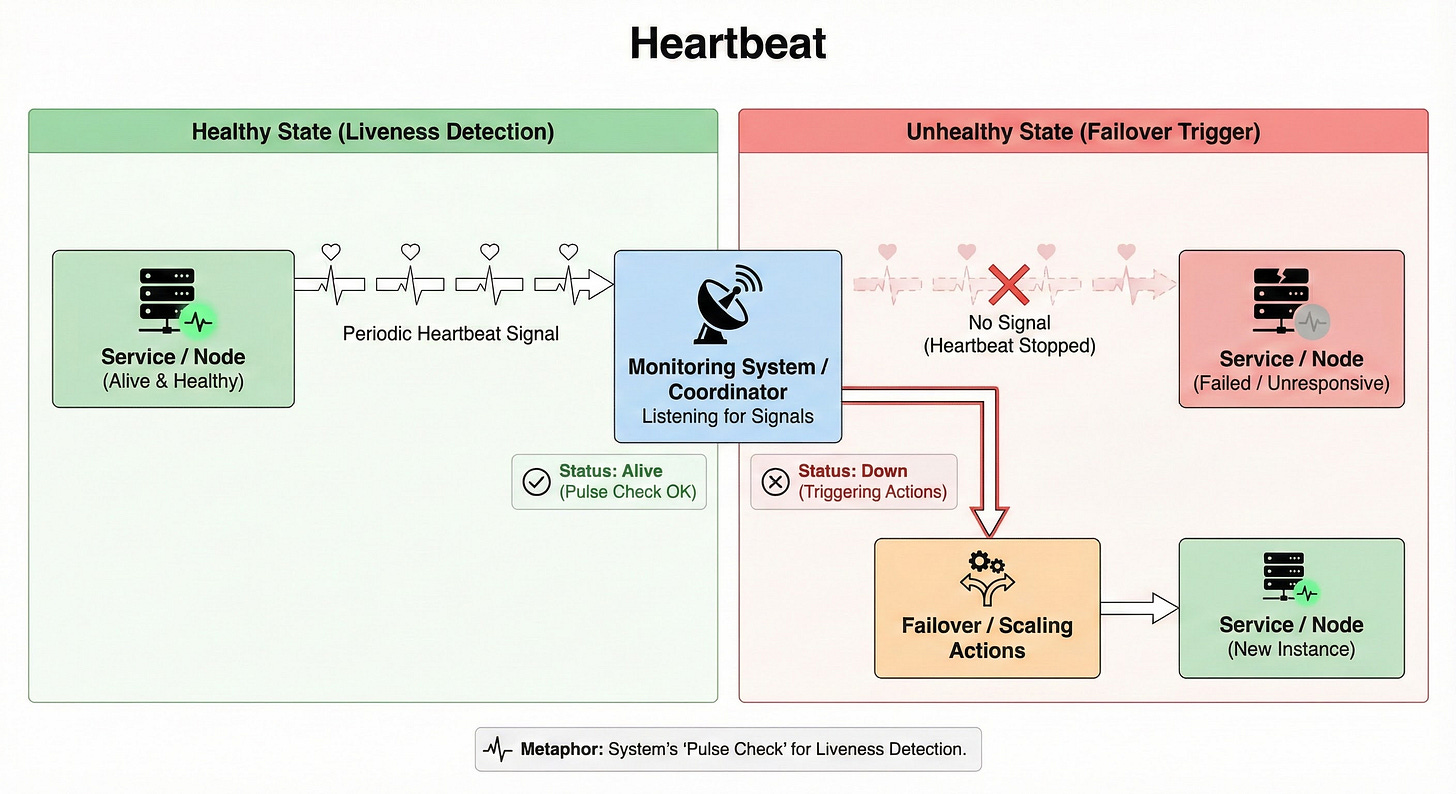

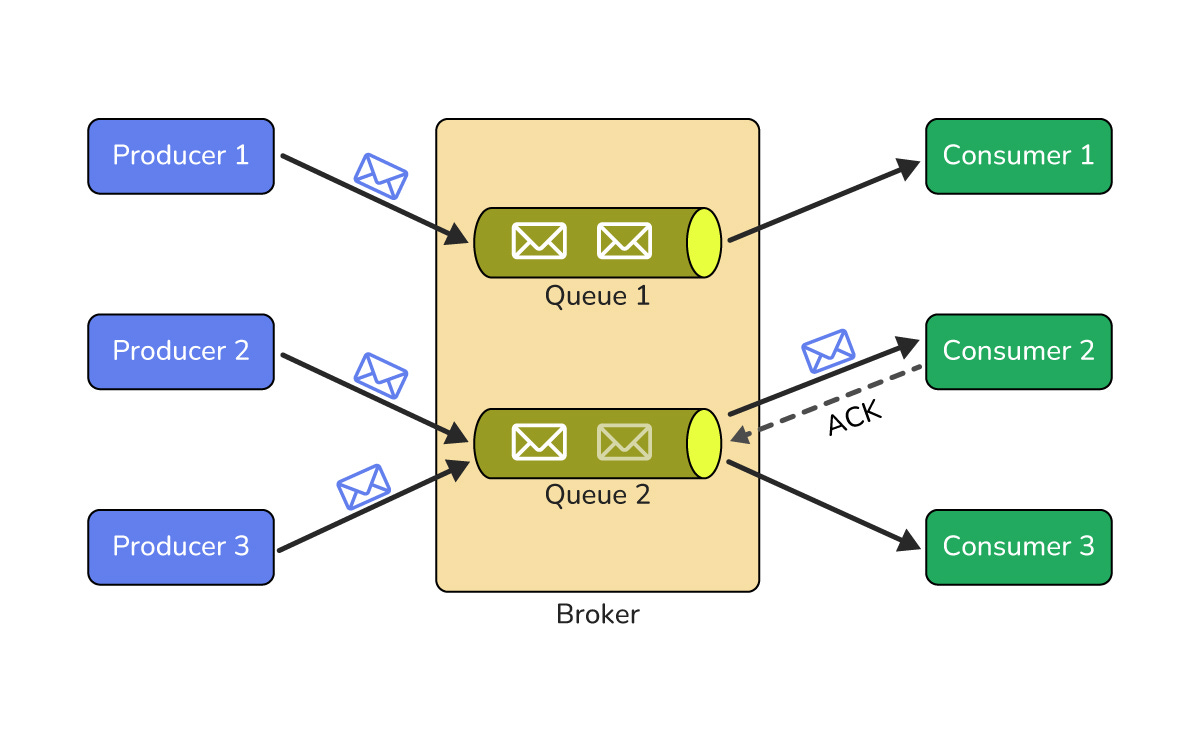

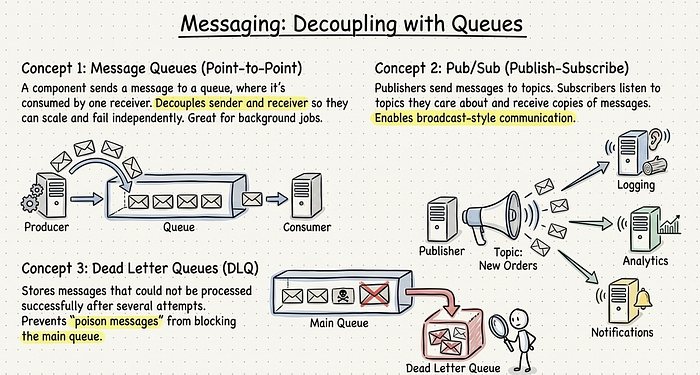

DLQ (Dead Letter Queues): Se o Lambda falhar ao processar um documento do S3, o dado se perde? O roadmap deve incluir a implementação de SQS para reprocessamento.

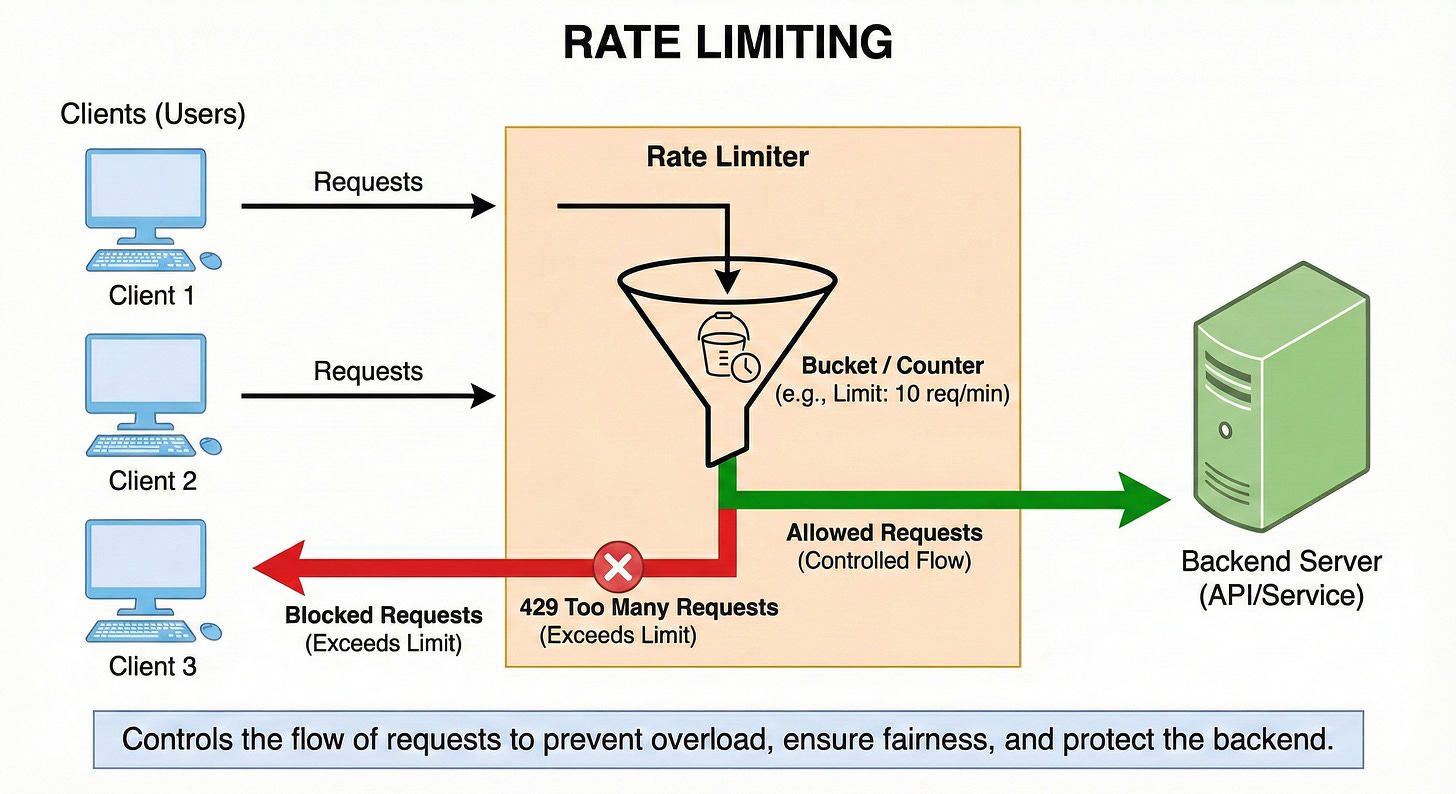

-

Throttling de API: O Textract tem limites de concorrência. O fluxo de dados deve prever mecanismos de retry ou filas de priorização.

-

Data Residency: No contexto da LGPD, o Data Flow confirma se o dado está saindo da região (ex:

us-east-1parasa-east-1). Se houver transferência internacional, isso deve ser tagueado na Interface.
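The retry and dead-letter ideas above can be sketched without any AWS dependency. The following is a minimal illustration, assuming a hypothetical `extract` callable and an in-memory list standing in for a dead-letter queue; in a real pipeline those roles would be played by the OCR service call and a queue such as SQS.

```python
import time


class ThrottledError(Exception):
    """Stand-in for a throttling error returned by the OCR service."""


def process_with_retry(document_id, extract, dead_letters,
                       max_attempts=3, base_delay=0.01, sleep=time.sleep):
    """Call extract(document_id), retrying with exponential backoff on throttling.

    If every attempt is throttled, the document id is parked in dead_letters
    for later reprocessing and None is returned.
    """
    for attempt in range(max_attempts):
        try:
            return extract(document_id)
        except ThrottledError:
            if attempt < max_attempts - 1:
                sleep(base_delay * 2 ** attempt)  # 0.01s, 0.02s, ...
    dead_letters.append(document_id)
    return None


# Hypothetical extractors, for illustration only.
calls = {"count": 0}


def flaky_extract(document_id):
    calls["count"] += 1
    if calls["count"] < 3:  # throttled on the first two calls
        raise ThrottledError()
    return {"document_id": document_id, "status": "EXTRACTED"}


def always_throttled(document_id):
    raise ThrottledError()


dead_letters = []
result = process_with_retry("doc-001", flaky_extract, dead_letters)
failed = process_with_retry("doc-002", always_throttled, dead_letters)

print(result, failed, dead_letters)
```

Parking the failed document instead of dropping it answers the "is the data lost?" question: the document stays in S3 and its id remains available for a later replay.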

4. The Impact on the LeanIX Inventory

Once this drawing is complete, your Fact Sheets will be enriched with:

- Consuming/Providing Applications: You will know exactly which Lambda reads from which S3 bucket.
- Data Objects at Rest vs. In Motion: A distinction between stored data (DynamoDB) and data being processed (Textract).

Architect's Insight: Use the "Impact Analysis" feature in LeanIX. If you need to update the Java version in the Lambda, the system will show that this may affect the write flow to DynamoDB, allowing you to plan the migration with less risk.

Next Step to Finalize Your Roadmap

Now that we have the Capabilities, Applications, Costs, LGPD, and the Data Flow, the natural next step is the Risk Assessment.

Would you like me to generate a risk matrix focused on Security and Availability for these AWS components, to help prioritize the tasks in your 2026 Roadmap?


Prompt

Yes

Gemini

To close the loop on your Inventory Roadmap in LeanIX, the Risk Matrix is what turns technical data into board-level decisions. In the 2026 context, with cloud maturity and even stricter data regulations, the focus should be on the triad of Security, Availability, and Compliance.

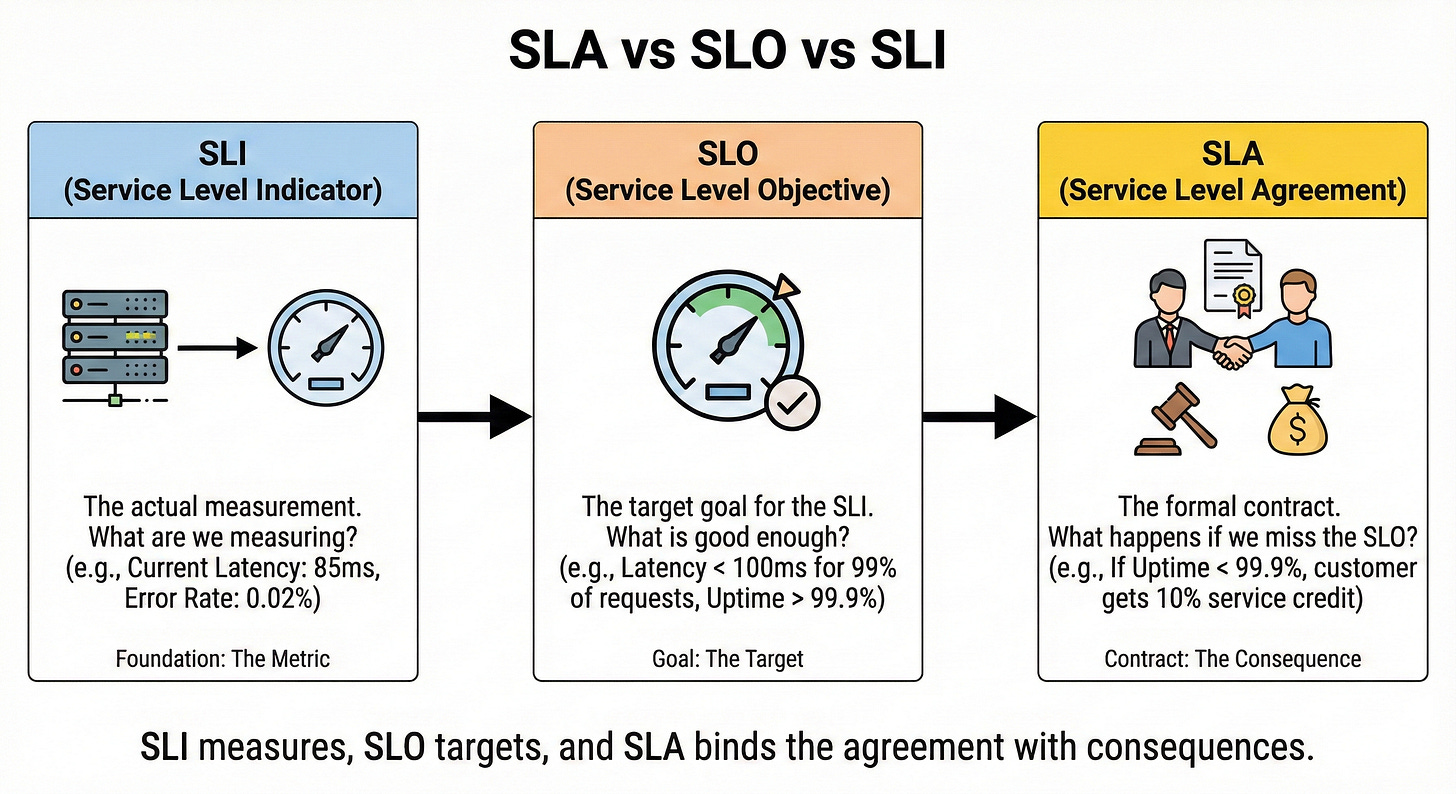

In LeanIX, you will use the "Resources & Risks" field on each Fact Sheet to map the following:

1. Risk Matrix: Document Management (AWS Stack)

| Component | Risk Category | Risk Scenario (Threat) | Impact | Probability | Mitigation (Roadmap Action) |
|---|---|---|---|---|---|
| S3 / DynamoDB | Security | Leak of personal data (LGPD) caused by a public-permission misconfiguration. | Critical | Low | Implement SCPs (Service Control Policies) and KMS encryption with customer-managed keys. |
| AWS Textract | Availability | Hitting API throttling limits during demand peaks. | Medium | High | Implement an SQS queue for buffering and retry control (retry logic). |
| AWS Lambda | Obsolescence | End of support (EOL) for the Java or Node.js (TypeScript) runtime. | Low | Medium | Automate Lifecycle monitoring in LeanIX to open upgrade tickets 6 months in advance. |
| API Gateway | Security | Injection attack or unintended exposure of internal endpoints. | High | Low | Use AWS WAF (Web Application Firewall) and authentication via Cognito/IAM. |

2. Risk Visualization in LeanIX (Impact Analysis)

Once these risks are registered, LeanIX lets you generate a Risk Heatmap. Picture the following chart:

- X axis: Business impact (financial/reputational).
- Y axis: Probability of occurrence.
- Quadrant color: Defines the priority in your 2026 Roadmap.

Architect's Note: If DynamoDB appears in the red quadrant (Critical Impact / Medium Probability), your Roadmap for Q3/2026 should prioritize implementing Global Tables or Point-in-Time Recovery (PITR) backups.
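A heatmap of this kind boils down to ranking risks by impact and probability. The following is a minimal sketch, assuming a simple multiplicative score on a hypothetical 1 to 4 scale; the scale and the scoring rule are assumptions for illustration, while the impact and probability levels are those given for each component in the risk matrix.

```python
# Hypothetical ordinal scale; the text does not define numeric values.
LEVELS = {"Low": 1, "Medium": 2, "High": 3, "Critical": 4}


def risk_score(impact, probability):
    """Multiplicative score: higher means higher priority on the roadmap."""
    return LEVELS[impact] * LEVELS[probability]


# (component, impact, probability) as listed in the risk matrix.
RISKS = [
    ("S3 / DynamoDB", "Critical", "Low"),
    ("AWS Textract", "Medium", "High"),
    ("AWS Lambda", "Low", "Medium"),
    ("API Gateway", "High", "Low"),
]

ranked = sorted(RISKS, key=lambda r: risk_score(r[1], r[2]), reverse=True)

for component, impact, probability in ranked:
    print(component, risk_score(impact, probability))
```

Under this scoring, Textract throttling ranks first because it is the only risk with high probability; a different weighting, for example one that emphasizes impact, would move the S3 / DynamoDB data-leak scenario to the top.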

3. Consolidating the Inventory Roadmap

With all components defined (Capabilities, Apps, IT, Costs, Flows, and Risks), your LeanIX inventory now delivers:

- Cost Visibility (FinOps): How much does it cost to process a document?
- Compliance (LGPD): Where is the sensitive data, and who accesses it?
- Technology Health: Which languages (Java/TS) need updating?
- Resilience: Where can the OCR pipeline break, and how do we fix it?

Next step for you to execute:

The inventory is complete in theory. To make it operational, the next step would be Automating Data Collection.

Would you like me to explain how to use the "LeanIX Integration API" to import this metadata (tags, versions, and costs) automatically from your AWS console, avoiding manual work?

Prompt

Yes

Gemini

For your 2026 Roadmap to scale, manual maintenance of the inventory must be eliminated. LeanIX has an "Automated Inventory" approach that connects directly to the cloud provider.

In your AWS context (DynamoDB, Lambda, S3), automation ensures that if a developer creates a new S3 bucket for documents, it shows up in LeanIX without human intervention.

1. Integration Architecture (AWS to LeanIX)

The integration usually follows this technical flow:

- AWS Config / Resource Explorer: Centralizes the asset inventory in your AWS account.
- LeanIX Cloud Discovery (Microservice): A connector that interacts with the AWS APIs (through a read-only IAM Role).
- Mapping Engine: Where AWS resources are translated into LeanIX Fact Sheets.

2. What to Automate (Metadata Mapping)

When configuring the integration API, you should map the following fields to feed your Roadmap:

- AWS Tags ➔ LeanIX Tags: Use tags such as CostCenter, Environment (Prod/Dev), and DataPrivacy directly on the AWS resources. LeanIX will read them and categorize the Fact Sheet automatically.
- Resource ID ➔ External ID: Links the ARN (Amazon Resource Name) of the DynamoDB table or Lambda function to the Fact Sheet, ensuring there is no duplication.
- Region ➔ Location: Automatically maps whether the data is in us-east-1 or sa-east-1 for your LGPD compliance report.
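The three mappings above amount to a small transformation from an AWS resource description to a Fact Sheet record. The following is a minimal sketch, assuming a hypothetical input dictionary and hypothetical output field names (`externalId`, `location`, `tags`); it does not reproduce the actual LeanIX integration schema, and the ARN, account id, and tag values are made up for illustration.

```python
def to_fact_sheet(resource):
    """Map a described AWS resource to a hypothetical Fact Sheet record."""
    arn = resource["arn"]
    # ARN layout: arn:partition:service:region:account-id:resource
    parts = arn.split(":", 5)
    tags = resource.get("tags", {})
    return {
        "externalId": arn,        # Resource ID -> External ID
        "type": "ITComponent",
        "name": parts[5],
        "location": parts[3],     # Region -> Location (empty for S3 bucket ARNs)
        "tags": [f"{key}:{value}" for key, value in sorted(tags.items())],
    }


# Made-up resource, for illustration only.
resource = {
    "arn": "arn:aws:dynamodb:sa-east-1:123456789012:table/document-metadata",
    "tags": {"CostCenter": "CC-1001", "Environment": "Prod", "DataPrivacy": "Personal"},
}

fact_sheet = to_fact_sheet(resource)
print(fact_sheet)
```

Using the full ARN as the external identifier is what prevents duplicates: a second discovery run that sees the same ARN can update the existing record instead of creating a new one.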

3. Practical Benefits for Your 2026 Roadmap

- "Shadow IT" Detection: If someone spins up a competing OCR service (e.g., Azure Form Recognizer) without informing the architecture team, the integration will detect it as a new IT Component.
- Real-Time Lifecycle Updates: If AWS announces the deprecation of a Node.js version used in your Lambda, LeanIX can flag the risk on the architecture dashboard immediately.
- Automated FinOps: Actual consumption costs for the Textract API can be imported monthly, updating the application's TCO without spreadsheets.

4. Implementation Strategy (Step by Step)

- Discovery Phase: Run Cloud Discovery in "Read-Only" mode to see everything it finds in AWS.
- Consolidation: Link the discovered resources (IT Components) to your existing Applications (e.g., link the S3 ARN to the "Document Management" application).
- Governance: Configure alerts for new resources that appear without the mandatory LGPD or Cost tags.
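The governance step can be sketched as a check for mandatory tags on discovered resources. This is a minimal illustration, assuming hypothetical tag names (`CostCenter`, `DataPrivacy`) and made-up resources; how alerts are actually raised depends on the tooling in use.

```python
# Hypothetical mandatory tags for cost and LGPD classification.
REQUIRED_TAGS = {"CostCenter", "DataPrivacy"}


def missing_tags(resource):
    """Sorted list of required tags that the resource does not carry."""
    return sorted(REQUIRED_TAGS - set(resource.get("tags", {})))


def untagged_resources(resources):
    """Map each non-compliant resource ARN to the tags it is missing."""
    return {r["arn"]: missing_tags(r) for r in resources if missing_tags(r)}


# Made-up discovered resources, for illustration only.
discovered = [
    {"arn": "arn:aws:s3:::documents-landing", "tags": {"CostCenter": "CC-1001"}},
    {
        "arn": "arn:aws:dynamodb:sa-east-1:123456789012:table/document-metadata",
        "tags": {"CostCenter": "CC-1001", "DataPrivacy": "Personal"},
    },
    {"arn": "arn:aws:lambda:sa-east-1:123456789012:function:ocr-handler"},
]

alerts = untagged_resources(discovered)
print(alerts)
```

Each entry in `alerts` is a candidate for a follow-up task: the bucket lacks a privacy classification, and the Lambda function carries no tags at all.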

Wrapping Up Your LeanIX Ecosystem

You now have a complete structure: Capabilities, Applications, IT, Costs, Data, Risks, and Automation.

With this level of detail, you are ready to generate the "IT Strategy Report" for your stakeholders, showing exactly how the OCR technology supports the business, how much it costs, and how secure it is.

Would you like me to put together an executive summary (as bullet points) that you can present to the board to justify the investments in this Inventory Roadmap?

Prompt

Perfect, go ahead and generate the IT Strategy Report

Gemini

This is the Executive Summary of your Document Management (OCR & AI) Roadmap for 2026. This report consolidates all the pillars we have built: Business Capabilities, Applications, AWS Infrastructure, Costs (FinOps), Risks, and Compliance (LGPD).

📄 IT Strategy Report: Intelligent Document Management

Objective: Modernize data custody and processing, ensuring operational efficiency and regulatory security.

1. Business Capability Overview

The Document Management capability has been decomposed into intelligence layers (OCR and Metadata Extraction), moving from a "passive storage" model to an "actionable data" model.

- Current Status: Transition to a Serverless architecture (AWS Lambda/Textract).
- Value Delivered: Reduction of manual processing time by X% and higher accuracy in identifying critical fields.

2. Financial Efficiency and TCO (FinOps)

We have moved away from management by estimate and adopted Cost per Document Processed.

- Cost Driver: AWS Textract usage accounts for the largest variable share; S3 and DynamoDB keep scaling costs low.
- 2026 Optimization: Implement lifecycle policies (S3 Lifecycle) and automatic deletion of non-essential data to reduce long-term storage costs.

3. Data Governance and Compliance (LGPD)

The inventory now maps the complete data lineage (Data Flow), ensuring full traceability.

- Security: Encryption at rest (KMS) and in transit for all metadata in DynamoDB.
- Data Residency: Processing guaranteed to stay in approved regions (e.g., sa-east-1), avoiding unauthorized international transfers of PII (Personally Identifiable Information).

4. Risk Management and Resilience

We have identified the critical points of the pipeline to avoid business interruptions:

- Throttling Mitigation: Introduce queues (SQS) to absorb upload peaks without losing messages.
- Technology Obsolescence: Automated monitoring of end of support for the Java and TypeScript runtimes, with migration windows planned for Q3/2026.

5. Evolution Roadmap (Next Steps)

To maintain the competitiveness and agility of the architecture:

- H1 2026: Complete the API integration between LeanIX and AWS for a real-time inventory (Auto-Discovery).
- H2 2026: Evaluate migrating simple Textract flows to smaller models (Small Language Models) to reduce processing cost.
- H2 2026: Implement Sustainability (GreenOps) dashboards to measure the carbon footprint of document processing.

Message to the Board: "Our Document Management architecture is no longer a black box of costs. Through LeanIX, we have full control over technology risk and the cost of each page processed, and assurance that we comply with data protection laws."

With this report, you have solid material for any governance meeting. Would you like me to simulate the 3 toughest questions a CFO or a CISO might ask about this roadmap so that you can prepare?